How Much Does AI Cost? Key Pricing Statistics to Know in 2026

Artificial intelligence spending rarely shows up as a single line item anymore. A modern rollout blends seat licenses, usage charges, add-on tools like search and retrieval, and the operational work required to keep results reliable. AI pricing can look simple on day one and still turn into a multi-line invoice once real teams start using the product at scale.

Many teams feel that gap between the promise and the bill. Leadership wants predictability, guardrails, and proof that the investment ties to business value. We’ve gathered current data on AI adoption, spending, and vendor pricing to show the numbers behind today’s pricing decisions. If you’re weighing an AI rollout and need clear benchmarks to support budget planning, these figures highlight what’s shaping costs in 2026, from vendor pricing patterns to the expense drivers that often appear after launch.

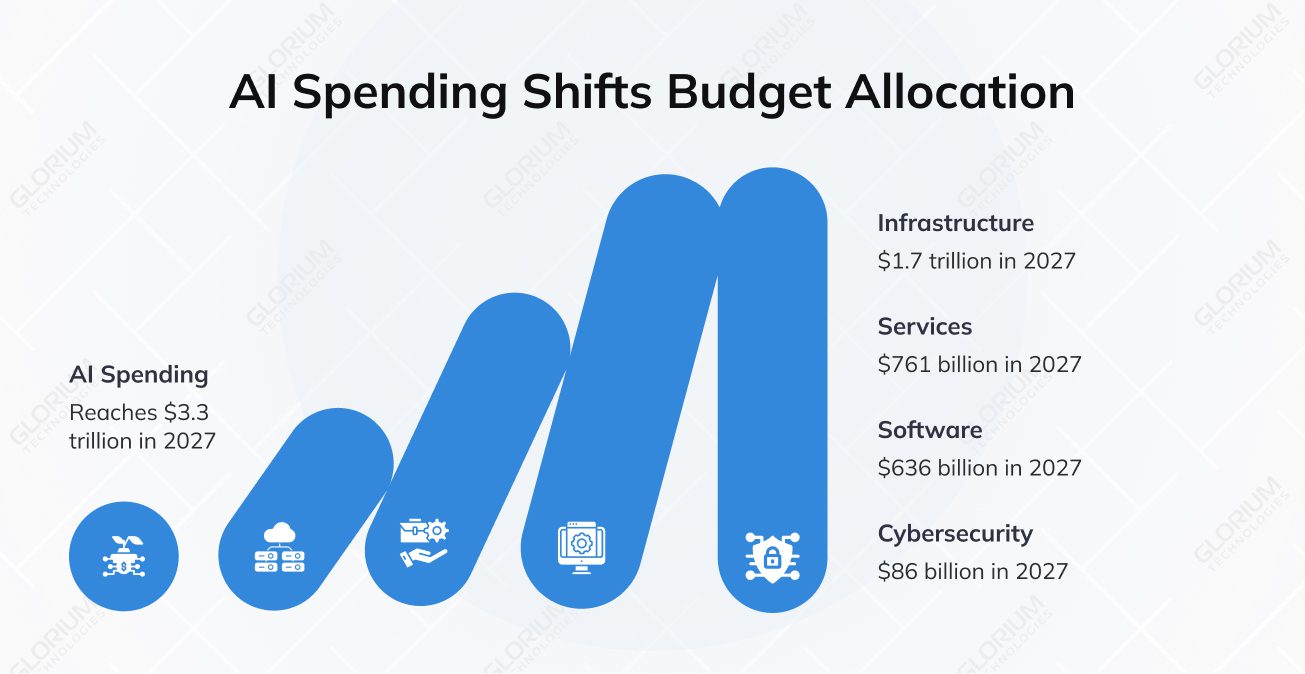

AI budgets in 2026 look very different from classic software budgets. Infrastructure, services, and security now compete with licenses for the same dollars, and spending keeps shifting toward infrastructure, cloud services, and computational resources.

Cost planning also starts earlier now. Leadership wants to see where spending concentrates before choosing vendors or rollouts. The numbers below show where organizations allocate money as AI moves from experiments to core operations.

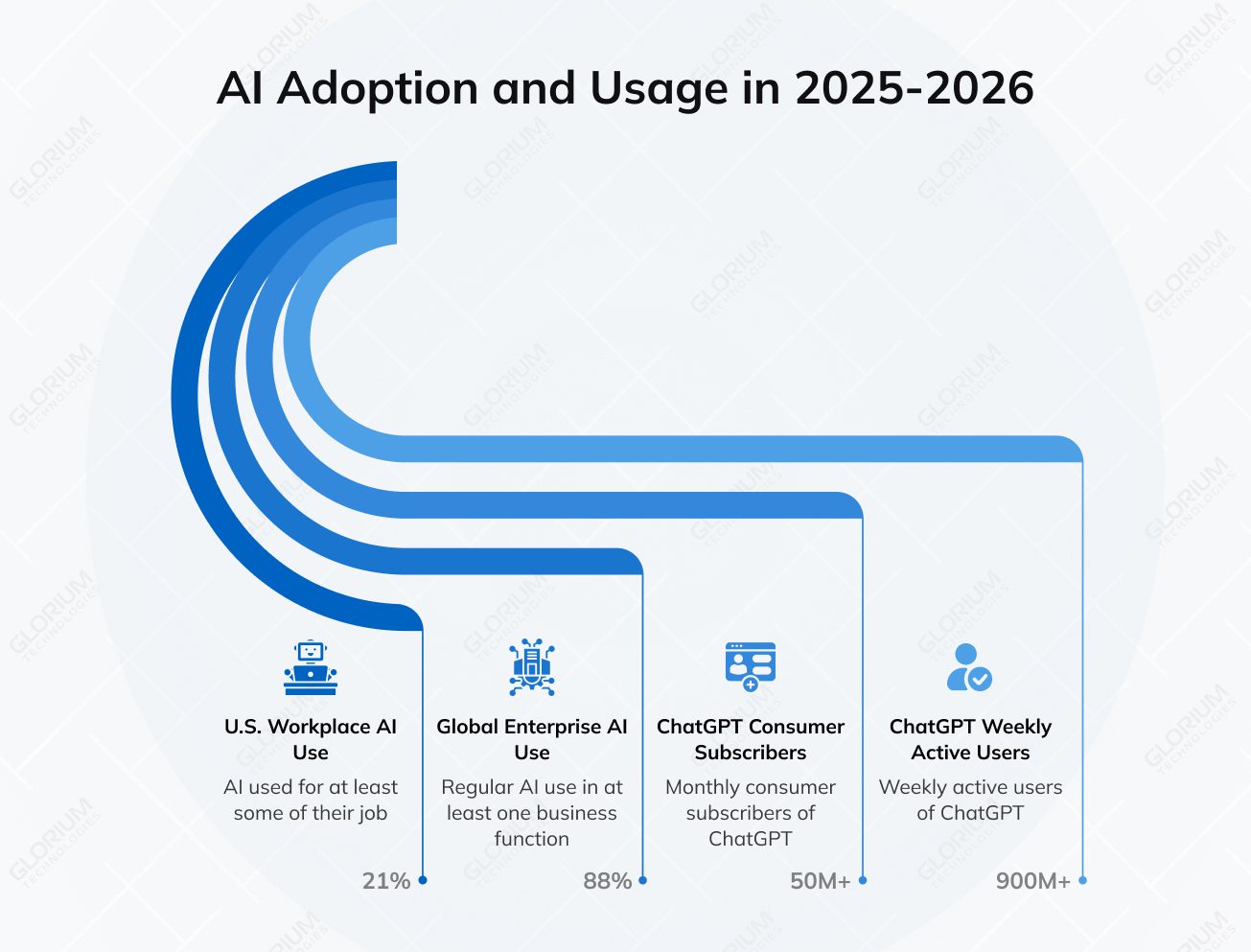

Adoption drives cost exposure more than almost any other factor. A tool that starts as a pilot can quickly become part of daily work. Usage patterns also vary by role, which makes budgeting harder without real benchmarks. The data below shows how widely AI is used and how quickly it spreads.

Unit costs keep falling, but total bills do not always follow. Cheaper inference often leads to more usage, bigger contexts, and more always-on workflows. At the same time, infrastructure demand continues to rise as teams scale. These statistics show both forces at once. They explain why AI pricing keeps adding meters and why infrastructure costs stay a budget anchor even when tokens get cheaper.

Most AI overruns come from production reality. Data preparation, data management, and ongoing maintenance often drive costs that teams don’t see during a pilot. Integration with existing systems, data readiness, governance, and security reviews all take time and money. Tool sprawl can also turn into spend sprawl fast.

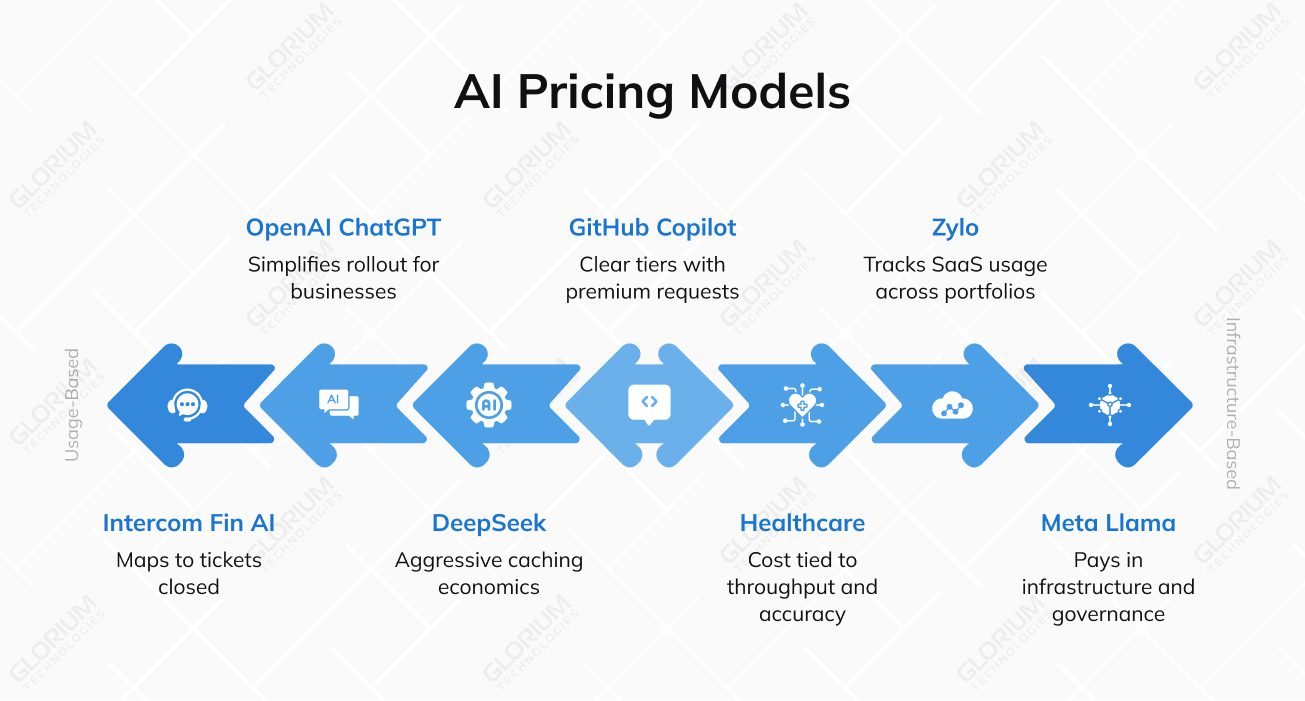

AI pricing keeps evolving because vendors and buyers need control. Seat-based pricing covers access, usage-based pricing covers demand, and outcome-based pricing ties spend to results, with hybrid pricing models becoming the default.

AI adoption is rising, but results are uneven. Cost blowups usually come from weak data foundations, unclear ownership, and risk controls that arrive too late. Teams also underestimate the operational work required to maintain AI systems over time.

Strong ROI appears when AI supports a repeatable workflow with clear metrics. Productivity gains often cluster in high-volume tasks like support, documentation, and internal knowledge work. Over time, the bigger goal is not only efficiency but also a clear competitive advantage. Results look weaker when teams chase broad automation goals without a tight use case; clear AI ROI usually comes from measurable cost savings or throughput gains.

“Businesses don’t pay for your time. They pay for outcomes. When you build an AI workflow, you’re usually saving them money, saving them time, or reducing human error.”

AI pricing now mirrors real AI costs. Market leaders increasingly sell AI software as a mix of off-the-shelf solutions and metered add-ons, even when the core product feels like a standard subscription. That model often separates basic access from premium AI capabilities.

GitHub sells Copilot as a developer add-on with clear tiers. The “seat” gets you in. Then premium requests meter higher-cost features like chat and agents. Copilot Business is $19/seat/month, with 300 premium requests/user/month, and $0.04 per extra request.
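
To see how the seat and the meter combine, here is a minimal sketch of the arithmetic using the Copilot Business figures quoted above. The function name and the sample team are hypothetical, and the sketch assumes each user's 300-request allowance is counted separately rather than pooled across seats, so check the vendor's current billing rules before relying on it.

```python
def copilot_business_monthly_cost(premium_requests_per_user,
                                  seat_cents=1900,
                                  included_requests=300,
                                  overage_cents=4):
    """Return the estimated monthly bill in dollars.

    Prices are the figures quoted in the article, held in cents to avoid
    float drift. Assumption: allowances are per user and are not pooled.
    """
    seats = len(premium_requests_per_user)
    # Only requests above each user's included allowance are billed extra.
    extra_requests = sum(
        max(0, used - included_requests) for used in premium_requests_per_user
    )
    return (seats * seat_cents + extra_requests * overage_cents) / 100


# Hypothetical team of four: two light users and two heavy users.
team_usage = [120, 300, 450, 900]
print(copilot_business_monthly_cost(team_usage))  # 106.0
```

In this made-up team, the four seats cost $76 and the two heavy users add $30 in overage, which is why per-role usage data matters more than the headline seat price.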

Intercom comes from customer messaging and support workflows. Its Fin AI Agent pricing is outcome-like: $0.99 per resolution. This model fits support leaders because it maps to tickets closed, not tokens burned.
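
Per-resolution pricing is easy to model. The sketch below uses the $0.99 figure quoted above; the function name and the ticket volumes are hypothetical, and it ignores any seat or platform fees that may apply alongside the per-resolution charge.

```python
def fin_monthly_cost(resolutions, conversations, price_cents=99):
    """Return (total dollars, effective dollars per conversation).

    Assumption: only resolved conversations are billed, at a flat rate.
    """
    if conversations <= 0:
        raise ValueError("conversations must be positive")
    total = resolutions * price_cents / 100
    return total, round(total / conversations, 4)


# Hypothetical month: 10,000 conversations, 4,000 resolved by the AI agent.
print(fin_monthly_cost(resolutions=4000, conversations=10000))  # (3960.0, 0.396)
```

The effective cost per conversation falls below the sticker price because unresolved conversations are not billed, so the resolution rate is the number to track.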

OpenAI runs two pricing worlds side by side. ChatGPT business plans price per user per month, which simplifies rollout. The API side meters usage and adds line items for tools and storage, like $0.10/GB/day vector storage and $2.50 per 1k File Search tool calls.
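
The tool and storage line items add up quickly, so it helps to estimate them separately from token spend. This is a rough sketch using only the two rates quoted above. The function name and the workload are hypothetical, and any free allowances or token charges are deliberately left out.

```python
from decimal import Decimal

# Rates as quoted in the article, in dollars.
STORAGE_PER_GB_DAY = Decimal("0.10")
FILE_SEARCH_PER_1K_CALLS = Decimal("2.50")


def tool_line_items(gb_stored, days, file_search_calls):
    """Estimate storage and File Search charges for one billing period."""
    storage = STORAGE_PER_GB_DAY * Decimal(str(gb_stored)) * days
    search = FILE_SEARCH_PER_1K_CALLS * Decimal(file_search_calls) / 1000
    return {"vector_storage": storage, "file_search": search,
            "total": storage + search}


# Hypothetical workload: 20 GB stored for 30 days, 40,000 File Search calls.
items = tool_line_items(gb_stored=20, days=30, file_search_calls=40_000)
print(items["vector_storage"], items["file_search"], items["total"])
# 60.00 100.00 160.00
```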

DeepSeek competes hard on raw API cost. It uses aggressive caching economics: $0.028 per 1M input tokens (cache hit) vs $0.28 (cache miss), plus $0.42 per 1M output tokens. That pushes teams to reuse stable prompt prefixes and long contexts.
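
The effect of caching is easiest to see with numbers. The sketch below applies the three per-million-token rates quoted above to a hypothetical monthly workload. The function name and the token counts are assumptions for illustration only.

```python
from decimal import Decimal

PER_MILLION = Decimal(1_000_000)

# Dollars per 1M tokens, as quoted in the article.
INPUT_CACHE_HIT = Decimal("0.028")
INPUT_CACHE_MISS = Decimal("0.28")
OUTPUT = Decimal("0.42")


def deepseek_cost(cached_input_tokens, uncached_input_tokens, output_tokens):
    """Return the estimated cost in dollars for the given token counts."""
    return (INPUT_CACHE_HIT * cached_input_tokens
            + INPUT_CACHE_MISS * uncached_input_tokens
            + OUTPUT * output_tokens) / PER_MILLION


# Hypothetical month: 100M input tokens and 20M output tokens.
no_cache = deepseek_cost(0, 100_000_000, 20_000_000)
mostly_cached = deepseek_cost(80_000_000, 20_000_000, 20_000_000)
print(no_cache, mostly_cached)  # 36.4 16.24
```

With 80% of input tokens served from cache, the same workload costs less than half as much in this example, which is the incentive behind stable prompt prefixes.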

Meta’s Llama is “open-weights,” so you don’t pay per call to a hosted API. Instead, you pay in infrastructure, MLOps, and governance to run it yourself. Meta says Llama has reached 1B downloads, which signals how real the self-hosted option is for enterprises.

Omega Healthcare uses AI to automate high-volume admin work in revenue cycle management. Business Insider reports 15,000 hours saved per month, 40% less documentation time, 99.5% accuracy, and 30% ROI for clients. This is the “pricing proof” many buyers want: cost tied to throughput and accuracy outcomes.

Zylo sits on the spend-management side. It tracks SaaS usage across large portfolios, using data from 30M licenses and $34B in SaaS spend under management. This matters for AI because tool sprawl can turn “a few pilots” into hundreds of subscriptions in a hurry.

When you need a partner to design, build, and ship real AI products, Glorium Technologies can support you end-to-end, from product discovery and prototyping to rollout. Delivery usually includes AI strategy, AI integration with existing systems, and iterative development led by AI developers, data scientists, and machine learning engineers. Teams work with you to choose the right approach for your use case, whether that means integrating third-party AI software, building custom AI development paths, or delivering advanced AI solutions that fit your existing systems and security requirements.

For instance, we recently worked with a U.S. durable medical equipment provider. The client needed a better way to handle appointment volume, reduce support calls, and limit the impact of no-shows. Glorium Technologies built a custom AI agent for patient scheduling using generative AI and predictive analytics, cutting support calls by 55% and reducing the negative impact of no-shows by 73%. Patients also gained 24/7 scheduling, more personalized communication, and a simpler scheduling experience. Results like these are achievable with a professional development team.

Want to bring AI to your company? Let’s talk. Book a quick intro call to discuss your goals, constraints, and a realistic plan for moving forward.

Ask for a sample invoice based on your workflows. Get a written list of every billable unit that can drive AI costs, including tokens, tool calls, storage, agent runs, higher-tier requests, and any “premium” features tied to specific AI models.

Then push on what changes the bill over time. Ask how caps and overages work, whether hard limits are possible, how often prices can change, and what happens when models get upgraded or replaced. Clear answers upfront reduce the hidden costs that show up after rollout.

Costs rise when usage expands beyond a small test group and begins to touch real volume across teams. Production also forces more guardrails, more monitoring, and more integration with existing systems, which adds maintenance and operational costs.

Quality requirements increase, too. Teams often add retrieval, longer context, and extra validation to reduce errors in GenAI outputs, which increases usage and the overall cost of AI as adoption grows.

Glorium Technologies helps you budget effectively by tying pricing to real workflows and setting cost guardrails early. That includes limits for high-cost actions, usage monitoring that finance can track, and design choices that keep AI pricing predictable as adoption expands.

Work also focuses on reducing surprise spend from tooling and operations. A clear cost model, a staged rollout, and ongoing optimization keep AI investment aligned with business value rather than uncontrolled use.

Seat-based pricing charges per user, which makes budgeting simple and works well when usage stays steady across teams. Usage-based pricing charges for consumption, such as tokens, tool calls, time, or outcomes, which fits workloads where demand varies and spikes.

Hybrid pricing is common in AI software today. A base seat grants access, while heavy usage is metered, keeping costs under control without blocking adoption.
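
A quick way to compare the three models is to run the same usage profile through each one. The sketch below does that with entirely hypothetical prices and usage figures; none of the numbers come from a real vendor.

```python
def compare_pricing_models(units_per_user, seat_cents, unit_cents,
                           hybrid_seat_cents, included_units, overage_cents):
    """Return the monthly cost in dollars under seat, usage, and hybrid pricing.

    All prices are in cents. In the hybrid model, each user gets
    `included_units` with the seat and pays `overage_cents` per extra unit.
    """
    users = len(units_per_user)
    total_units = sum(units_per_user)
    overage_units = sum(max(0, u - included_units) for u in units_per_user)
    cents = {
        "seat": users * seat_cents,
        "usage": total_units * unit_cents,
        "hybrid": users * hybrid_seat_cents + overage_units * overage_cents,
    }
    return {model: value / 100 for model, value in cents.items()}


# Hypothetical team: one light, one moderate, and one heavy user.
print(compare_pricing_models(
    units_per_user=[50, 200, 1500],
    seat_cents=3000, unit_cents=5,
    hybrid_seat_cents=2000, included_units=500, overage_cents=4,
))
# {'seat': 90.0, 'usage': 87.5, 'hybrid': 100.0}
```

Which model comes out cheapest depends on how uneven usage is across the team, so it is worth rerunning the comparison with real usage data after a pilot.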

A reliable budget for off-the-shelf tools starts with roles. Light users need basic access, while power users drive high costs through frequent use, long context, and automation, so tiered limits work better than a single limit for everyone.

Add a shared budget for platform overhead like storage, monitoring, and ongoing maintenance, then adjust after a short rollout based on real usage. That approach answers the question “how much does AI cost” with data rather than guesswork.
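
The role-based approach described above can be sketched as a small budget model. The tier names, headcounts, and dollar amounts below are hypothetical placeholders to be replaced with figures from a real rollout.

```python
from dataclasses import dataclass


@dataclass
class RoleTier:
    name: str
    headcount: int
    seat_usd: int        # monthly seat price per user
    usage_cap_usd: int   # monthly metered-usage limit per user


def monthly_budget(tiers, shared_overhead_usd):
    """Return (per-role ceilings, total ceiling) in dollars per month."""
    per_role = {
        t.name: t.headcount * (t.seat_usd + t.usage_cap_usd) for t in tiers
    }
    return per_role, sum(per_role.values()) + shared_overhead_usd


# Hypothetical rollout: 80 light users, 20 power users, $900 shared overhead
# for storage, monitoring, and maintenance.
tiers = [
    RoleTier("light", headcount=80, seat_usd=20, usage_cap_usd=5),
    RoleTier("power", headcount=20, seat_usd=20, usage_cap_usd=60),
]
print(monthly_budget(tiers, shared_overhead_usd=900))
# ({'light': 2000, 'power': 1600}, 4500)
```

Because the caps are upper limits, the result is a budget ceiling rather than a forecast. Actual spend should be compared against it after the first few weeks of use.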

Model training makes sense when pre-trained AI models keep failing in predictable ways that prompts and retrieval can’t fix, especially in natural language processing workflows with strict accuracy or formatting requirements. It also becomes more cost-effective for AI systems with steady, high-volume workloads, where a smaller tuned model can reduce AI costs and improve reliability. Many AI projects reach that point after an AI prototype proves value, and teams see consistent gaps that only custom model training can address.

Pre-trained models are usually the right starting point when speed matters and budgeting is a priority during AI implementation. They keep the AI software development process simpler and reduce development time, since teams can focus on data preparation, AI integration with existing systems, and guardrails instead of training. Model training raises total costs through training runs, infrastructure, and the ongoing upkeep of AI systems over time. For many AI initiatives, the best path is to start with pre-trained models, measure business value, and move to model training only when the economics and quality targets clearly justify the added AI development cost.

A realistic estimate treats AI as a system cost, not a tool price. Artificial intelligence costs usually combine AI pricing from vendors, infrastructure costs from cloud services, and the development costs of integrating AI with existing systems. Start with two or three workflows, map what drives AI costs in each one, and use that to budget effectively across rollout, support, and cost management.

The full number should include the development process. AI implementation often adds costs for security, monitoring, and rollout support, and those ongoing costs can grow as usage increases. A solid estimate separates one-time setup from monthly run rates, so the AI investment reflects real operating conditions.
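
Separating one-time setup from the monthly run rate can be sketched as follows. The function name and every figure are hypothetical, and the model assumes that only the usage-driven part of the run rate grows, at a constant monthly rate.

```python
def total_cost_of_ownership(one_time_setup, fixed_monthly, variable_monthly,
                            monthly_usage_growth, months):
    """Return one-time, run, and total costs in dollars over the horizon.

    Assumption: the variable (usage-driven) cost compounds each month by
    `monthly_usage_growth`, while the fixed monthly cost stays flat.
    """
    run_total = 0.0
    for month in range(months):
        variable = variable_monthly * (1 + monthly_usage_growth) ** month
        run_total += fixed_monthly + variable
    return {
        "one_time": one_time_setup,
        "run_total": round(run_total, 2),
        "total": round(one_time_setup + run_total, 2),
    }


# Hypothetical project: $40,000 setup, $3,000 fixed and $2,000 usage-driven
# cost per month, with usage growing 10% per month over three months.
print(total_cost_of_ownership(40_000, 3_000, 2_000, 0.10, 3))
# {'one_time': 40000, 'run_total': 15620.0, 'total': 55620.0}
```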

Hidden costs usually come from data and operations. Data preparation and data management often expand once teams realize high-quality data is needed for stable results, especially in generative AI, natural language processing, and customer-facing virtual assistants. AI technology may look simple in a pilot, but production use exposes gaps in workflows, permissions, and review processes.

Ongoing maintenance adds another layer. Teams often underestimate the work required from AI developers, data scientists, and machine learning engineers once models are live. Monitoring, evaluations, support, retraining, and fixes tied to changing requirements all add maintenance effort, even when the initial AI service looked cost-effective.