AI Document Processing: The 2026 Implementation Guide for Enterprises

Manual document processing is one of those problems that looks manageable until you actually measure it. Approval cycles stretch nearly three weeks. Invoice handling costs run up to $40 per document. Multiply those numbers across your monthly volumes, and the picture changes quickly.

AI document processing is the fix. It takes in unstructured business documents in any format, classifies them, extracts the relevant fields, validates the data, and routes clean, structured output directly into your systems without manual keying or routing delays.

This guide covers how intelligent document processing (IDP) works, what it costs, where it delivers the clearest return, and what separates successful deployments from the ones that stall.

Intelligent document processing is a system that converts unstructured business documents into structured, ready-to-use data using OCR, natural language processing (NLP), and machine learning. When a business receives an invoice, contract, or claim form, the system classifies the document type, uses computer vision and NLP to extract key fields and key-value pairs, validates the extracted data, and routes it into downstream workflows. The result is end-to-end automation of document-driven processes with no manual data capture.
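The classify, extract, validate, and route flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the keyword classifier, the `REQUIRED_FIELDS` table, and destination names such as `manual_review` are hypothetical stand-ins for the trained models and workflow systems a real IDP platform would use.

```python
from dataclasses import dataclass, field

# Hypothetical validation rules: fields each document type must contain.
REQUIRED_FIELDS = {
    "invoice": ["invoice_number", "total"],
    "claim": ["claim_number"],
}


@dataclass
class ProcessedDocument:
    doc_type: str
    fields: dict
    errors: list = field(default_factory=list)
    destination: str = ""


def classify(text: str) -> str:
    """Toy keyword classifier; a real system would use a trained model."""
    lowered = text.lower()
    if "invoice" in lowered:
        return "invoice"
    if "claim" in lowered:
        return "claim"
    return "unknown"


def extract(text: str) -> dict:
    """Collect 'Key: value' lines as normalized key-value pairs."""
    fields = {}
    for line in text.splitlines():
        key, separator, value = line.partition(":")
        if separator and key.strip() and value.strip():
            fields[key.strip().lower().replace(" ", "_")] = value.strip()
    return fields


def validate(doc_type: str, fields: dict) -> list:
    """Return one error message per required field that is missing."""
    required = REQUIRED_FIELDS.get(doc_type, [])
    return [f"missing {name}" for name in required if name not in fields]


def process(text: str) -> ProcessedDocument:
    """Run the four stages and decide where the document goes next."""
    doc_type = classify(text)
    fields = extract(text)
    errors = validate(doc_type, fields)
    if doc_type == "unknown" or errors:
        destination = "manual_review"
    else:
        destination = f"{doc_type}_workflow"
    return ProcessedDocument(doc_type, fields, errors, destination)


sample = "INVOICE\nInvoice Number: INV-1001\nTotal: 1250.00\n"
result = process(sample)
print(result.destination, result.fields)
```

Keeping each stage as its own function mirrors how the layers are usually swapped independently: the classifier or extractor can be replaced with a model-backed version without touching validation or routing.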

Valued at $3.22 billion in 2025, the intelligent document processing market is projected to reach $43.92 billion by 2034, growing at a 33.68% CAGR. 88% of organizations now report regular AI use in at least one business function, and 78% of enterprises use AI specifically for document processing. Among those, 66% of new IDP projects replace first-generation systems rather than building from scratch. Yet 61% of IDP processes still include paper documents, and 48% of organizations expect paper volumes to rise. The technology has crossed from experimental to mainstream.
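As a quick sanity check, the market figures are consistent with one another if growth is compounded over the nine years from 2025 to 2034. The sketch below assumes simple annual compounding; the function names are illustrative.

```python
def project_value(start_value: float, annual_rate: float, years: int) -> float:
    """Compound start_value at annual_rate for the given number of years."""
    return start_value * (1 + annual_rate) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that takes start_value to end_value over years."""
    return (end_value / start_value) ** (1 / years) - 1


YEARS = 2034 - 2025  # nine compounding periods

projected_2034 = project_value(3.22, 0.3368, YEARS)
cagr = implied_cagr(3.22, 43.92, YEARS)

print(f"Projected 2034 market size: ${projected_2034:.2f}B")
print(f"Implied CAGR: {cagr:.2%}")
```

Compounding $3.22 billion at 33.68% for nine years gives roughly $43.9 billion, which matches the projection to within rounding.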

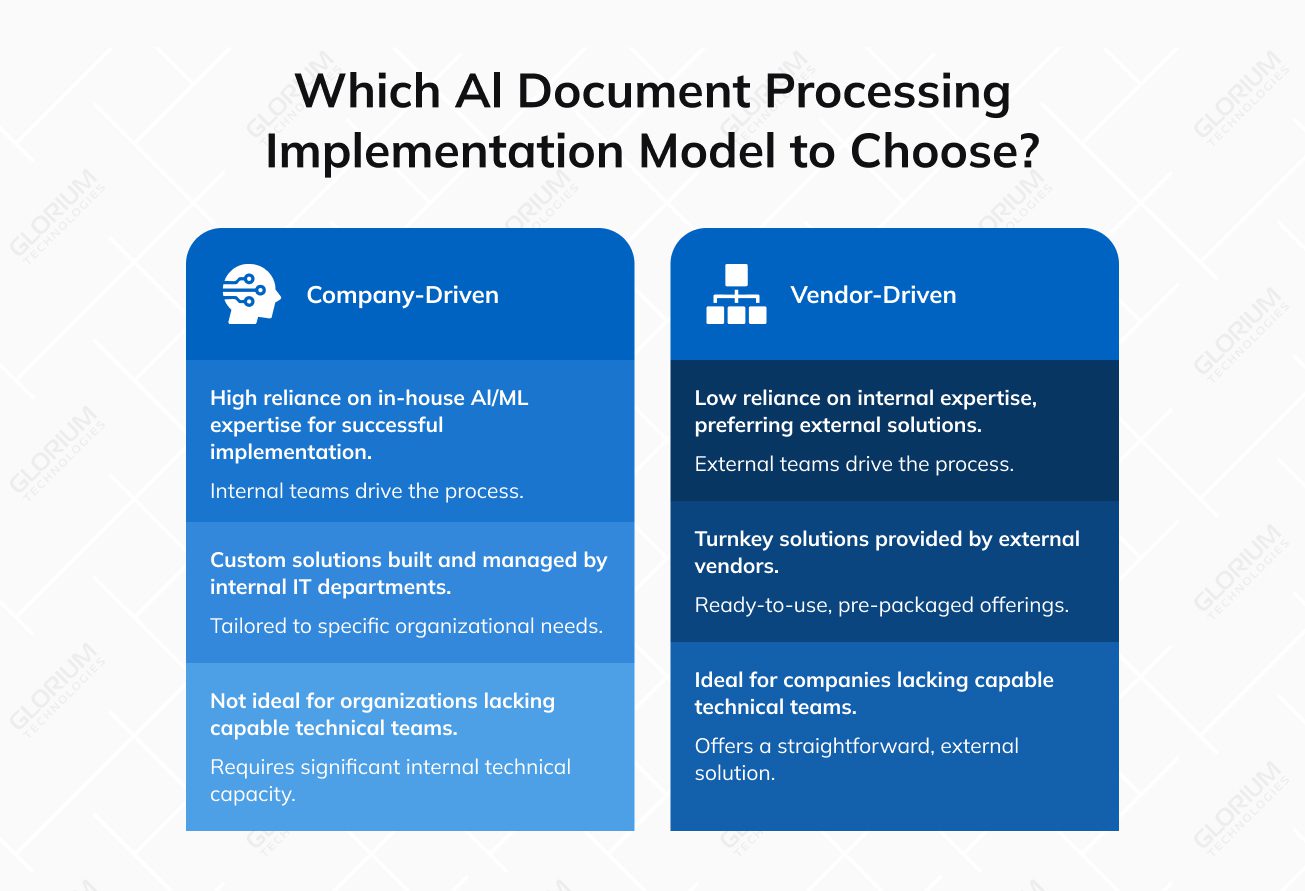

Two implementation models dominate. Company-driven implementations rely on internal IT departments, sometimes with external consultants, and work best when the organization has AI/ML expertise in-house. Vendor-driven implementations occur when companies lack capable technical teams and prefer turnkey solutions. In either model, keeping the initial scope narrow and confirming data readiness before expanding is what separates success from failure.

Businesses that delay AI-optimized document processing face compounding competitive disadvantage as the market matures. The core drivers are volume, speed, errors, and cost. And regulatory pressure accelerates all four.

Document volumes are growing while processing expectations tighten. Organizations processing thousands of documents monthly face a straightforward calculus: in supply chain operations alone, manual processing carries a 5-10% error rate and processing cycles that run 25% longer than automated workflows. Gartner predicts that 60% of AI projects unsupported by AI-ready data will be abandoned through 2026, making data quality the primary success factor.

Compliance obligations compound the urgency. Under the EU AI Act, compliance spans six dimensions: transparency, accountability, safety, fairness, privacy, and human oversight. Your organization’s obligations hinge on document volume, industry regulation, data sensitivity, and how you process information. Implementation timelines range from three to six months for targeted deployments, extending to eighteen months for enterprise-wide rollouts.

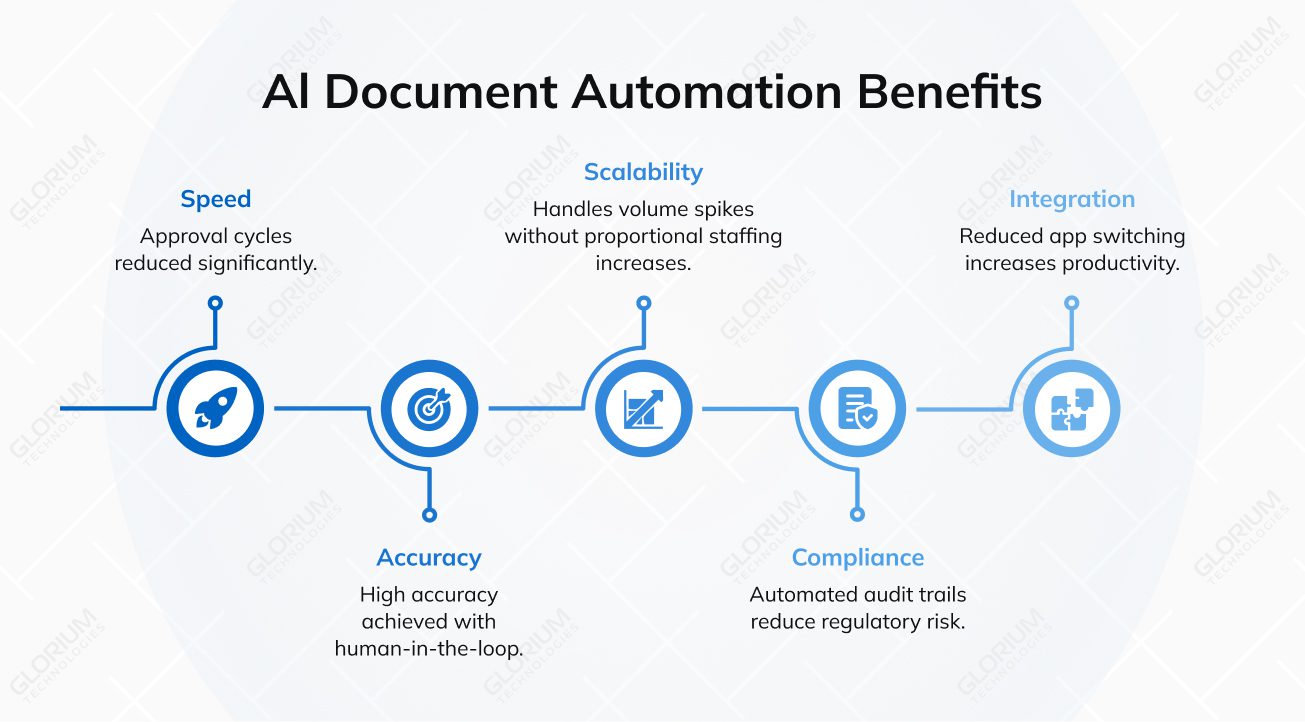

A typical benefit realization cycle has seven stages: needs assessment, vendor evaluation, pilot deployment, data pipeline configuration, workflow integration, staff training, and full production. Beyond this, the strategic advantages include:

For business users, the impact is visible immediately: automated processing handles large volumes of business documents without proportional staffing increases and frees teams to focus on higher-value work. Done right, IDP improves operational efficiency across document-driven processes.

Each technology layer in AI-optimized document processing solves a distinct problem, and understanding their roles prevents over-investment in the wrong component.

Traditional optical character recognition (OCR) achieves 95-98% accuracy on clean, printed text, but drops to 40-60% on complex document formats. AI-based visual processing achieves 67% accuracy on those same complex formats and reduces handwritten text error rates from 15-20% to 5-10%. What matters most is that a 2% character-level OCR error rate can compound through post-processing steps into 15-20% information extraction errors in production. That means as many as one in five documents requires human intervention, even with nominally high-accuracy systems.
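One way to see how a small character-level error rate can grow into a much larger field-level error rate is a simple independence model. This is an illustrative assumption, not the method behind the figures above: if each character is misread independently with probability p, a field of n characters contains at least one error with probability 1 - (1 - p)^n.

```python
def field_error_rate(char_error_rate: float, field_length: int) -> float:
    """Probability that a field of field_length characters has at least one
    misread character, assuming independent per-character errors."""
    return 1 - (1 - char_error_rate) ** field_length


# Hypothetical field lengths, e.g. a short reference number vs. a longer ID.
for length in (4, 8, 11, 20):
    rate = field_error_rate(0.02, length)
    print(f"{length:>2} characters: {rate:.1%} of fields contain an error")
```

Under this model, a 2% character error rate puts fields of roughly 8 to 11 characters in the 15-20% range, and longer fields fare worse. Real pipelines differ (errors cluster, and post-processing can correct some of them), so treat this as intuition rather than a prediction.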

NLP handles document classification, entity extraction, and contextual understanding. It turns raw text into structured fields. Machine learning models improve over time as they process more documents, but they require accurate, up-to-date training datasets to perform effectively. For a machine learning engineer to work effectively, you need to provide well-prepared data with clear classification labels, extraction fields, and validation rules.

In practice: A/B test your model configurations systematically. Recruit data scientists and domain experts who can liaise between end users and stakeholders so that training data reflects real document variability.
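A systematic A/B comparison can be as simple as scoring two configurations against the same labeled sample. The sketch below is a minimal illustration: `field_accuracy`, the two configuration outputs, and the sample labels are hypothetical, and a real evaluation would use a much larger, representative document set.

```python
def field_accuracy(predictions: list[dict], labels: list[dict]) -> float:
    """Share of labeled fields whose predicted value matches exactly."""
    total = 0
    correct = 0
    for predicted, expected in zip(predictions, labels):
        for name, expected_value in expected.items():
            total += 1
            if predicted.get(name) == expected_value:
                correct += 1
    return correct / total if total else 0.0


# Hypothetical ground truth prepared by domain experts.
labels = [
    {"invoice_number": "INV-1001", "total": "1250.00"},
    {"invoice_number": "INV-1002", "total": "89.90"},
]

# Hypothetical outputs from two model configurations on the same documents.
config_a_output = [
    {"invoice_number": "INV-1001", "total": "1250.00"},
    {"invoice_number": "INV-1002", "total": "89.00"},  # misread total
]
config_b_output = [
    {"invoice_number": "INV-1001", "total": "1250.00"},
    {"invoice_number": "INV-1002", "total": "89.90"},
]

accuracy_a = field_accuracy(config_a_output, labels)
accuracy_b = field_accuracy(config_b_output, labels)
print(f"Config A: {accuracy_a:.0%}, Config B: {accuracy_b:.0%}")
```

Scoring both configurations on identical documents keeps the comparison fair; differences then reflect the configuration rather than the sample.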

“There is no perfect document processing pipeline — you will probably need a lot of time to observe and go through visual inspections of different documents you’re going to work with for your specific systems.”

Venelin Valkov, Machine Learning Engineer

AI document processing delivers the highest ROI in industries with high document volumes, strict compliance requirements, and costly error consequences.

A mid-sized commercial bank will process hundreds of thousands of loan files, KYC packets, trade confirmations, and regulatory filings in a year, and any one of them can cause real compliance trouble if a clause gets missed. Legal and analyst hours to review this material are expensive, so the ROI math tends to work out quickly.

JPMorgan’s COIN platform is the example most people still cite. The bank rolled it out back in 2017 to review commercial loan agreements, and the system chews through 12,000 contracts in seconds, replacing work that used to eat up roughly 360,000 legal hours a year. Peer institutions have followed the same recipe: pull out the clauses, flag anything unusual, and route the rest without a human touching it. What’s really shifted in the last couple of years is the underlying tech. Template-based OCR would break the moment a document didn’t match the sample. LLM-assisted extraction handles amendments, oddball addenda, and marked-up scanned pages from older portfolios without falling over.

Government agencies are buried in paper, and legal retention rules mean they can’t just shred it. Benefits applications, immigration files, tax returns, and records requests all have to be read, sorted, and tied back to a specific citizen record. Staffing shortages haven’t helped. Neither have rising public expectations. People now compare their DMV experience to their Amazon experience, fairly or not. AI document processing gives agencies a way to work through the backlog without hiring at a pace they couldn’t actually sustain, and newer platforms combine image cleanup, OCR, and generative extraction in one pipeline. That’s what finally made decades-old microfilm and scanned TIFF archives workable.

The Social Security Administration is one of the more public examples. The agency signed an $81 million deal to process 250 million retiree and survivors’ benefits documents, hit FedRAMP High authorization, and, in some workflows, cut citizen response times by something close to 99%. States are rolling out similar systems for unemployment claims, and federal tax authorities are headed in the same direction. A handful of state DMVs have also started using AI document processing for Real ID rollouts, which turns what used to be two or three in-person visits into a single digital submission.

Healthcare is probably where document quality has the most direct line to revenue. Every claim, prior authorization, clinical note, lab report, and intake form feeds the revenue cycle somewhere, and errors downstream turn into denials or delayed payments. Clinician burnout from documentation workload sits on top of all of it, which means the problem has both financial and workforce dimensions. AI document processing pulls clinical and administrative data out automatically, so claims go out clean the first time. Platforms built for this industry handle the HIPAA side, plug into EHRs through FHIR or HL7, and keep an audit trail for every record they touch.

The numbers are rough. 41% of healthcare organizations now say 10% or more of their claims get denied, compared to 30% back in 2022. Clinical documentation issues cost around $1 million per organization each year, and that’s before counting the appeals work piled on top. Glorium Technologies’ healthcare software development team has worked with payers and providers on exactly this kind of workflow, trimming manual coding hours and getting AI extraction running inside existing EHR and revenue cycle systems without disrupting clinical operations.

Manufacturing is where the ROI math on document processing is hardest to argue with. Every invoice, bill of lading, packing slip, and certificate of analysis is a transaction that needs to line up with a purchase order, an ERP record, and a shipment. Mis-key any of it and the consequences show up fast: a container sitting in customs, a late fee to a carrier, or a production line running short on components because the parts order got entered wrong. Automated intake keeps the physical movement of goods and the paper trail in sync, which is why document processing usually comes up alongside broader ERP modernization work.
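The check that an invoice lines up with its purchase order and shipment is often called a three-way match in accounts payable. The sketch below illustrates the idea under simplifying assumptions: the `LineRecord` structure and its field names are hypothetical, and each document is reduced to a single line item.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class LineRecord:
    """One line item as it appears on an invoice, purchase order, or receipt."""
    po_number: str
    sku: str
    quantity: int
    unit_price: Decimal


def three_way_match(invoice: LineRecord, purchase_order: LineRecord,
                    receipt: LineRecord) -> list[str]:
    """Return a list of discrepancies; an empty list means the documents agree."""
    issues = []
    if not (invoice.po_number == purchase_order.po_number == receipt.po_number):
        issues.append("PO number mismatch")
    if not (invoice.sku == purchase_order.sku == receipt.sku):
        issues.append("SKU mismatch")
    if invoice.quantity != receipt.quantity:
        issues.append("invoiced quantity differs from received quantity")
    if invoice.unit_price != purchase_order.unit_price:
        issues.append("invoiced price differs from purchase order price")
    return issues


po = LineRecord("PO-7001", "BRKT-12", 500, Decimal("2.40"))
receipt = LineRecord("PO-7001", "BRKT-12", 500, Decimal("2.40"))
invoice = LineRecord("PO-7001", "BRKT-12", 550, Decimal("2.40"))  # mis-keyed quantity

print(three_way_match(invoice, po, receipt))
```

Using `Decimal` for prices avoids the rounding surprises that floating-point comparison can introduce when amounts must match exactly.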

Platform selection requires evaluation against your specific document types, integration needs, and compliance requirements. Gartner published its first-ever Magic Quadrant for IDP Solutions in 2025 after evaluating over 100 vendors. That’s useful market validation, but it also means the choice is harder than it was three years ago, when fewer credible options existed.

Three dimensions should drive the process: AI capabilities the platform actually offers versus what you need, integration potential and fit with existing systems, and total cost of ownership, including ongoing maintenance. Look for third-party assessments rather than vendor claims alone.

A study from AIIM found that smaller businesses benefit more from IDP cross-functionality than large enterprises do. A platform architected for millions of documents per month can be operationally heavy to run if your volumes don’t justify it. Match the platform to your actual scale, not the scale you’re hoping to reach.

GenAI is becoming an equalizer that challenges vendors’ ability to stand out on accuracy benchmarks alone. Integration capability and workflow orchestration are the real differentiators now — how cleanly a platform connects to your existing enterprise systems matters more than headline accuracy numbers.

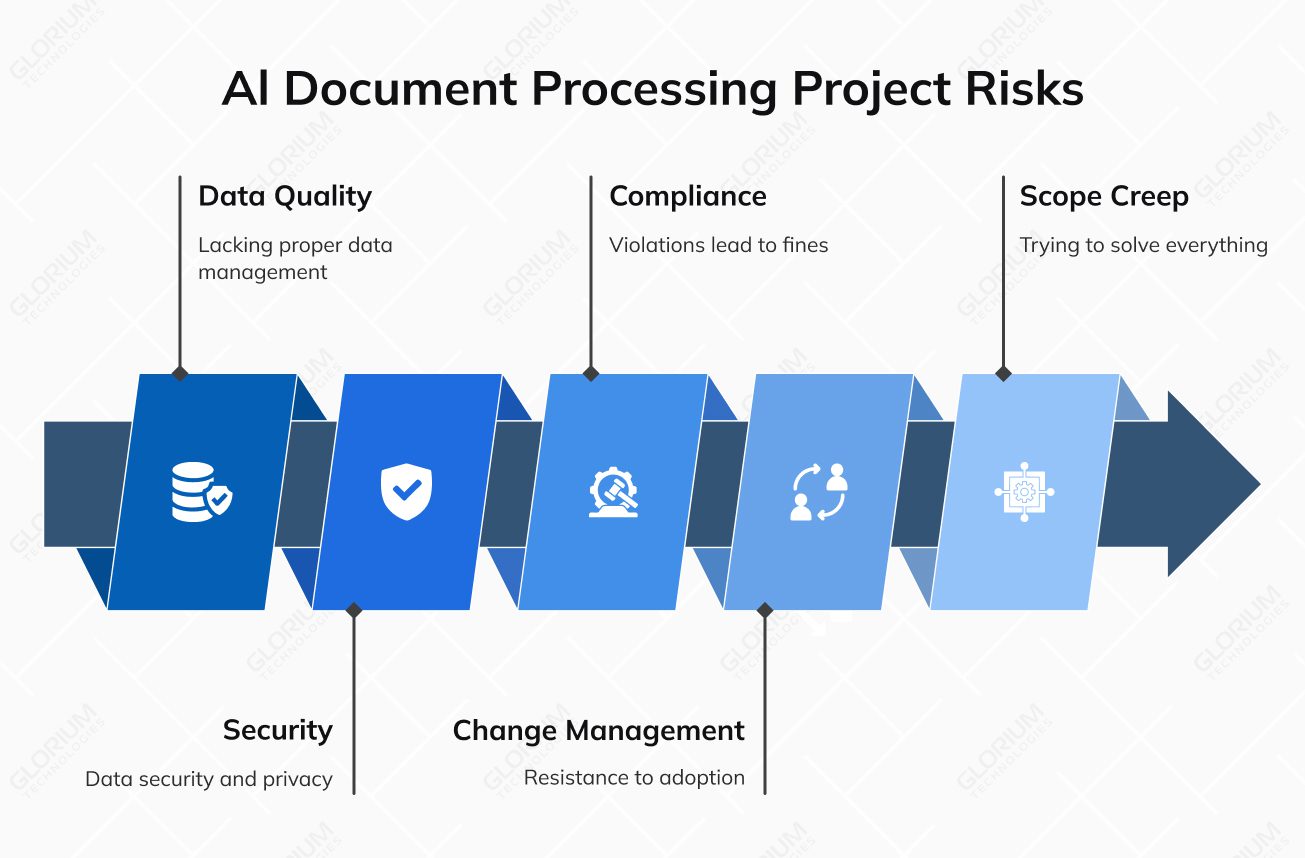

Data readiness, not model quality, is the primary reason intelligent document processing projects fail. Gartner predicts that 30% of generative AI projects will be abandoned after proof of concept due to poor data quality, inadequate risk controls, escalating costs, or unclear business value.

When making the case for data readiness to management, focus on the bottom-line impact.

Key risk areas include:

Independent consultants may be more proficient at aligning AI capabilities to business processes due to focused expertise. Platform vendors have intimate system knowledge but might lack a broader implementation context. Define required capabilities rather than desired features, and pin down the total cost of ownership, including ongoing model maintenance.

Nobody gets to full automation in a single release. The realistic timeline is usually a few years, not a few quarters, and the teams that get there tend to pick a painful document type, automate it well, then expand from there. Here are some of the best practices:

Agentic AI is reshaping document processing architecture. Traditional setups handle one task in isolation (extract an invoice, classify a contract) and hand off to a human for whatever comes next. Agentic AI changes that by letting the system read the document, pull context from connected tools like the ERP, take the next action on its own, and only escalate when confidence drops. By 2028, Gartner predicts that 33% of enterprise software applications will include agentic AI. Organizations investing early in agentic AI position themselves to benefit from compounding returns as the technology matures. Here are the main trends to track:

The common thread across all of these is sequencing. Start with the document types that run in high volume and hold up approvals for days at a time. Those are the workflows where agentic AI, cloud-native infrastructure, and continuous learning compound fastest, and where organizational buy-in is easiest to maintain because the business impact shows up quickly.

AI-powered document processing done well is a long-term initiative. Done poorly, it’s a proof of concept that gets quietly shelved after six months because the data wasn’t ready, the scope was too broad, or nobody defined what success actually looked like before the build started.

Glorium Technologies brings years of AI software development and machine learning engineering expertise across healthcare, finance, manufacturing, and enterprise operations. Our AI consulting services cover everything from data readiness assessment through to deployment and ongoing model maintenance: the operational foundation that keeps the system working.

Wondering what it looks like in real life? An HME/DME provider came to us needing to automate its billing and document workflows across a complex three-payer system involving patients, insurance companies, and direct customers. We built the platform from scratch — automated billing, secure payment integration, invoice processing, and a cloud-based reporting system covering financials, inventory, and patient records across the entire revenue cycle. The business went paperless. Payment times dropped. Administrative setbacks that had previously required manual intervention were eliminated.

Our data science consulting team handles the pieces most organizations underestimate: training data preparation, extraction pipeline configuration, and the governance structures that keep document processing accurate well after go-live.

We follow three rules on every engagement:

Contact us to talk through what implementation realistically looks like for your document types and systems. We’ll help you scope it right.

Timelines range from 2–3 months for basic systems handling structured documents to 10–18 months for enterprise intelligent document processing platforms that include optical character recognition, natural language processing, document classification, and full workflow orchestration across business systems. The scope of your document-centric business processes is the biggest driver of that range. A phased approach, piloting on one document type first and then expanding, consistently outperforms big-bang deployments, which account for the majority of the ~40% of implementations that miss their initial ROI projections.

What you pay depends on a handful of things. How much you need to customize the AI model for your specific document formats matters a lot. So does whether you deploy in the cloud or on-premise, how wide the rollout is, and all the secondary costs that rarely show up in the first quote: pulling data out of legacy systems, cleaning it, training end users on the new workflows. Most teams also underestimate the productivity hit during the transition itself, which is real and should be budgeted for. On the low end, basic systems that handle data extraction from structured documents run $15,000 to $40,000. On the high end, enterprise IDP platforms that cover unstructured and semi-structured documents and integrate deeply with your ERP or CRM can land anywhere from $400,000 to well over $1,500,000.

Yes, intelligent document processing spans the full technology stack: from ingestion and preprocessing of scanned documents and digital documents, through OCR and machine learning model inference, to data validation and data integration into your existing enterprise systems. A well-architected IDP solution pushes structured data, key-value pairs, and transactional data directly into your ERP or CRM, eliminating redundant data entry and keeping financial documents and operational records accurate across every platform. Neglecting any layer of that stack creates a new weak link for errors across the entire business process.

Out-of-the-box document AI models handle standardized formats reasonably well, but most real-world business documents aren’t standardized. Industry-specific layouts, scanned images with printed and handwritten text, and documents with specialized terminology all introduce variability that general models weren’t built for. Custom training on your own labeled data, covering your specific document types, document formats, and extraction targets, is what delivers materially better data accuracy for use cases like invoice processing, document analysis, and document classification across complex or unstructured documents. Without it, your machine learning models are working from guesswork on inputs they’ve never seen.

No intelligent document processing system achieves perfect accuracy on every document type, especially with free-form documents, low-quality scanned images, or sensitive data in non-standard layouts. Human-in-the-loop workflows address this by automatically routing low-confidence extractions to human reviewers before the data reaches your business systems — vendor-reported benchmarks show this approach achieving 99.9% accuracy at scale. A data steward monitors processing quality, manages exceptions, and enforces data governance standards across document pipelines day to day. Strong human oversight combined with robust data validation is what makes automated document processing reliable enough to trust across high-volume, document-driven business processes.
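Confidence-based routing of this kind can be sketched as follows. The `ExtractedField` structure, the `REVIEW_THRESHOLD` value of 0.90, and the queue names are assumptions for illustration; in practice the threshold is tuned per field and per document type.

```python
from dataclasses import dataclass

# Hypothetical cut-off: fields below this confidence go to a human reviewer.
REVIEW_THRESHOLD = 0.90


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # model-reported score between 0.0 and 1.0


def fields_needing_review(fields: list[ExtractedField],
                          threshold: float = REVIEW_THRESHOLD) -> list[str]:
    """Names of fields whose confidence falls below the threshold."""
    return [f.name for f in fields if f.confidence < threshold]


def route_document(fields: list[ExtractedField],
                   threshold: float = REVIEW_THRESHOLD) -> str:
    """Send the document to review if any field is low-confidence."""
    if fields_needing_review(fields, threshold):
        return "human_review"
    return "straight_through"


extracted = [
    ExtractedField("invoice_number", "INV-1001", 0.99),
    ExtractedField("total", "1250.00", 0.72),  # blurry scan, low confidence
]

print(route_document(extracted), fields_needing_review(extracted))
```

Returning the specific low-confidence field names lets the reviewer check only what the model was unsure about instead of re-keying the whole document.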

Data extraction refers to pulling specific key-value pairs and fields (invoice numbers, dates, totals) from business documents and converting them into structured data that your systems can act on. Document analysis is the broader upstream step: understanding the document’s structure, type, and context before any data extraction begins. Together, they form the core of how intelligent document processing (IDP) converts unstructured data, embedded in scanned documents, digital form submissions, and free-form documents, into usable data. Document AI platforms handle both steps automatically, but IDP solutions differ significantly in how well they handle edge cases and non-standard document formats.
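To make the extraction step concrete, the sketch below pulls an invoice number, a date, and a total out of already-recognized text and converts them to typed values. It is a rule-based illustration only: the regular expressions, the ISO date format, and the `extract_invoice_fields` name are assumptions, and production systems typically rely on trained models rather than fixed patterns.

```python
import re
from datetime import date
from decimal import Decimal

# Hypothetical patterns for one simple invoice layout.
INVOICE_NUMBER = re.compile(
    r"\bInvoice\s*(?:No\.?|Number|#)\s*:?\s*([A-Z0-9-]+)", re.IGNORECASE
)
ISO_DATE = re.compile(r"\bDate\s*:?\s*(\d{4})-(\d{2})-(\d{2})", re.IGNORECASE)
TOTAL = re.compile(r"\bTotal\s*:?\s*\$?\s*([\d,]+\.\d{2})", re.IGNORECASE)


def extract_invoice_fields(text: str) -> dict:
    """Return typed key-value pairs for the fields found in the text.

    Note: an impossible date such as 2024-13-45 raises ValueError here;
    a fuller version would route that document to validation instead.
    """
    fields = {}
    if match := INVOICE_NUMBER.search(text):
        fields["invoice_number"] = match.group(1)
    if match := ISO_DATE.search(text):
        year, month, day = (int(part) for part in match.groups())
        fields["date"] = date(year, month, day)
    if match := TOTAL.search(text):
        # Strip thousands separators before converting to an exact decimal.
        fields["total"] = Decimal(match.group(1).replace(",", ""))
    return fields


ocr_text = (
    "Invoice No: INV-2024-0042\n"
    "Date: 2024-03-15\n"
    "Subtotal: $1,200.00\n"
    "Total: $1,250.00\n"
)
print(extract_invoice_fields(ocr_text))
```

The word boundary in the total pattern keeps it from matching the "Subtotal" line, a small example of the edge cases that fixed patterns have to anticipate one by one.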