The State of AI in Healthcare: Adoption, Use Cases, and What Comes Next in 2026

Over the past three years, healthcare artificial intelligence has slipped out of the demo stage and into core clinical and administrative infrastructure. By the end of 2025, roughly half of US nonfederal acute care hospitals had implemented generative AI in some form, up from 25% in late 2023, according to McKinsey research. Regulators are keeping pace. The FDA cleared 295 AI/ML-enabled medical devices in 2025, a record year and a clear signal that approval pathways are maturing.

This article is for healthcare leaders, IT teams, clinical innovators, and product owners deciding where to invest next. It covers the state of AI adoption across the sector, the use cases that are actually moving the needle, the regulatory and data hurdles that still slow rollouts, and the trends worth tracking through 2026.


Three shifts make 2026 a meaningful inflection point. The first is technological: large language models and multimodal systems have reached a level of reliability that clinicians are willing to trust for narrow, well-scoped tasks like ambient scribing and imaging triage. The second is regulatory: the FDA’s Predetermined Change Control Plan (PCCP) framework, finalized in December 2024, lets AI/ML devices update post-market within defined boundaries, which removes a major planning obstacle for device makers. The third is financial: according to a McKinsey survey, 82% of industry leaders now expect positive ROI from their AI programs, compared to near-universal uncertainty a few years ago.

Adoption is also broader than most executives realize. Large academic medical centers are no longer the only ones running production AI. Independent clinics, diagnostic labs, payers, pharma companies, and public health agencies all have something in flight, even if many remain cautious about clinical decision support. The point is no longer whether a hospital will use AI, but how quickly it can stand up the data governance, clinical workflows, and compliance checkpoints to do so responsibly.

That shift changes how the conversation sounds in the boardroom. Three years ago, the question was: “Should we experiment with AI?” In 2026, it is usually: “Which three use cases give us measurable returns inside twelve months, and who is going to help us integrate them into our EHR without breaking anything?”

“Healthcare AI will not work out of the box. It needs clinical expertise, workflow alignment, and continuous iteration to become useful in real care settings.”

Dr. Ami Bhatt, Chief Innovation Officer at the American College of Cardiology

Adoption varies sharply by segment. According to Menlo Ventures’ 2025 healthcare AI research, 22% of healthcare organizations have implemented domain-specific AI tools, a 7x increase over 2024. Health systems lead at 27% adoption, outpatient providers follow at 18%, and payers sit at 14%. Spending follows the same curve: of roughly $1.4 billion flowing into healthcare-specific generative AI, providers account for $1 billion, while payers contribute just $50 million.

Pharma looks different at the market-sizing level. According to Grand View Research, pharmaceutical and biotechnology companies held the largest revenue share of any end-user segment in 2025, with around 80% of life sciences professionals using AI in drug discovery. Diagnostic labs and imaging centers are the fastest-growing end-user segment. Providers have largely pushed past the “death by pilot” pattern, while payer buying cycles are actually lengthening, from 9.4 to 11.3 months. The gap between production deployments and pilots usually comes down to three things: clean clinical data, a physician champion at the department level, and early regulatory fluency.

Regulators are no longer the rate-limiting step. The FDA cleared 295 AI/ML medical devices in 2025, with a median clearance time of 142 days, and its Predetermined Change Control Plan framework now lets devices update post-market within defined boundaries. The EU AI Act entered into force on August 1, 2024, classifying CE-marked AI medical devices as high-risk, with full compliance required by August 2027. Geography reflects all of this. North America held 44.5% of the global AI in healthcare market in 2025, supported by a deep digital health infrastructure and a regulatory environment that prioritizes speed. Europe runs more cautiously, with healthcare-specific deployment lagging sectors like finance. Asia Pacific is forecast to be the fastest-growing region through 2030, with hospitals in India, Vietnam, and the Middle East skipping legacy infrastructure entirely and government-funded programs in Saudi Arabia, the UAE, and Singapore investing in national AI in healthcare strategies.

Certain names come up repeatedly when healthcare organizations discuss advanced AI deployments. Kaiser Permanente’s rollout of Abridge ambient scribing spans 40 hospitals across eight states. Mass General Brigham reported a 21.2% drop in physician burnout prevalence after 84 days of ambient AI use. Mayo Clinic deployed NVIDIA Blackwell infrastructure to power generative AI solutions at scale, positioning itself as the first major health system to run a hospital-grade supercomputer for AI-accelerated diagnosis.

What do these organizations have in common beyond deep pockets? A clear internal governance structure, a named clinical leader for each AI initiative, and a willingness to publish results honestly, including when models fall short. Institutions looking to follow their path do well to replicate the structure before worrying about tooling.

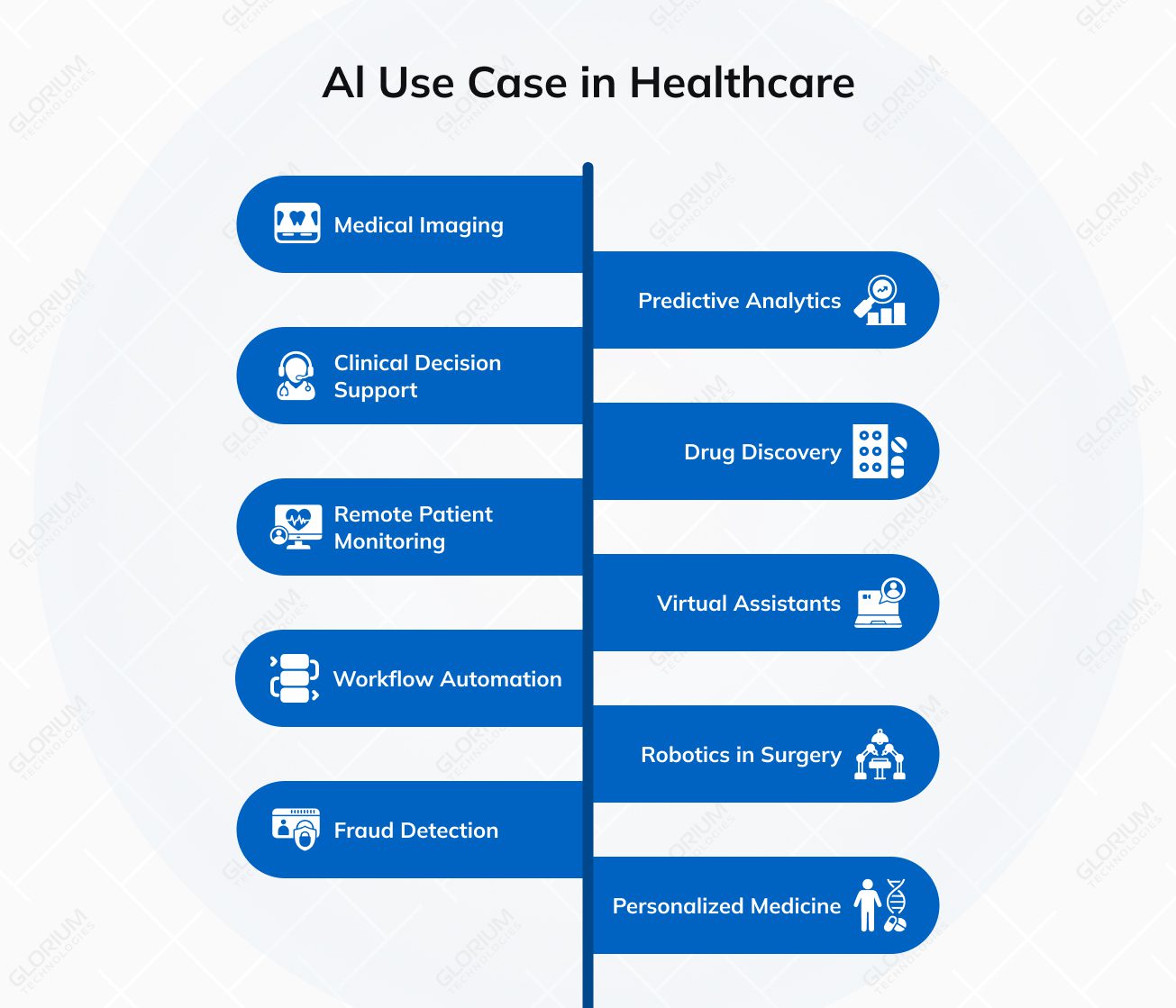

The set of healthcare AI use cases has matured since 2023. Some categories are fully operational, others are scaling quickly, and a third group is still finding its footing. Each of the following sub-sections covers a specific application area where measurable value is being produced today.

Imaging is the most mature clinical AI application by a wide margin. Roughly 76% of all FDA-cleared AI/ML medical devices are radiology-focused, according to recent analyses of the FDA public registry. Models trained on X-rays, CT scans, MRIs, and mammograms now routinely match or exceed specialist performance on narrow detection tasks, especially in oncology screening, stroke triage, and fracture identification.

The practical impact shows up in throughput. Imaging departments using AI as a second reader typically process more cases per hour and catch incidental findings that manual review misses on busy days.

Predictive models forecast deterioration, readmission, sepsis onset, and disease progression using data already sitting in the EHR. Epic’s Deterioration Index, validated for sepsis patients, is one of the most widely deployed examples. RWJBarnabas Health reported a 15% reduction in mortality using Epic’s predictive deterioration tool, the kind of result that cements the case for clinical decision support inside the medical record.

Clinical decision support pushes relevant guidelines, contraindications, and treatment suggestions to physicians at the point of care. Unlike earlier rule-based systems that produced alert fatigue, current generation tools use patient-specific context to reduce noise. Sutter Health’s native integration of AI decision support into Epic workflows illustrates the pattern: recommendations surface only when relevant and can be dismissed with one click when they are not.

Pharmaceutical companies use AI to identify promising compounds, predict molecular behavior, and screen candidate drugs faster than traditional bench work allows. Insilico Medicine became the first firm to complete Phase 2a trials on a generative AI-discovered drug with positive efficacy signals. Industry trackers put AI-designed drugs at significantly higher Phase I success rates than the historical industry norm, though attrition in later phases still applies.

Wearable devices and connected home sensors feed real-time data into AI systems that flag anomalies before they become emergencies. Chronic disease management, cardiac monitoring, and post-surgical recovery are the three areas where remote patient monitoring has produced the clearest returns, especially for Medicare populations, where reducing readmissions directly improves payer economics. Glorium Technologies’ work on IoT development and medical device software has intersected regularly with remote monitoring projects of this type.

AI-powered chatbots now handle symptom intake, appointment scheduling, medication reminders, and post-discharge follow-up at scale, often integrated directly into patient portal software. The tools work best when scoped to specific tasks rather than positioned as general “medical advice” assistants. Clinical practices that deploy them for triage and scheduling see call volume drop by 30% or more, freeing front-desk staff for higher-value work.

Administrative automation represents the highest-rated physician use case for AI. According to AMA survey data, 57% of physicians believe AI tools are relevant for billing codes, medical charts, and visit notes. Revenue cycle management is the prime example: AI automates claims submission, denial management, coding validation, and payment posting, often as part of a broader hospital management software deployment. Fox Valley Orthopedics reported a 25% reduction in claim denials and a 5.7x ROI on their AI investment, the kind of measurable return that makes the business case for cost-conscious finance teams.

Robotic surgery systems have existed for two decades, but AI is now adding real-time intraoperative guidance, tissue recognition, and outcome prediction to the existing systems. Post-operative outcome tracking, powered by vision models, helps surgeons refine technique based on measurable results rather than case memory alone.

Payers and integrated delivery networks use AI to spot irregular billing patterns, duplicate claims, and compliance risks across large transaction volumes. The same systems help with prior authorization workflows, where Medicaid services and commercial payers both face pressure to respond faster.
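The simplest layer of duplicate-claim detection can be sketched without any machine learning at all. The example below is a minimal illustration, not a description of any payer's production system: the field names (`claim_id`, `patient_id`, `provider_id`, `procedure_code`, `service_date`) and the sample values are hypothetical, and real pipelines typically add fuzzy matching and anomaly models on top of exact-match grouping like this.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Claim = Dict[str, str]  # hypothetical flat claim record


def find_duplicate_claims(claims: List[Claim]) -> List[List[str]]:
    """Group claim IDs that share patient, provider, procedure code, and service date."""
    groups: Dict[Tuple[str, str, str, str], List[str]] = defaultdict(list)
    for claim in claims:
        key = (
            claim["patient_id"],
            claim["provider_id"],
            claim["procedure_code"],
            claim["service_date"],
        )
        groups[key].append(claim["claim_id"])
    # Only keys seen more than once are candidate duplicates for human review.
    return [ids for ids in groups.values() if len(ids) > 1]


# Illustrative sample data (all identifiers are made up).
claims = [
    {"claim_id": "C1", "patient_id": "P1", "provider_id": "D9",
     "procedure_code": "99213", "service_date": "2026-01-05"},
    {"claim_id": "C2", "patient_id": "P1", "provider_id": "D9",
     "procedure_code": "99213", "service_date": "2026-01-05"},
    {"claim_id": "C3", "patient_id": "P2", "provider_id": "D9",
     "procedure_code": "99213", "service_date": "2026-01-05"},
]
duplicates = find_duplicate_claims(claims)
```

Here `duplicates` contains one group, claims `C1` and `C2`, which a reviewer would then confirm or dismiss. Keeping a person in that loop matters because legitimate repeat services can look identical at this level of detail.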

AI pulls genetic data, lifestyle factors, medication history, and prior treatment responses into a single profile that informs individualized treatment planning. Oncology is the leading edge, with tumor boards regularly using AI to match patients to targeted therapies and clinical trials. The broader challenge is integrating genomic data with electronic health records in a way that clinicians can actually act on during a visit.

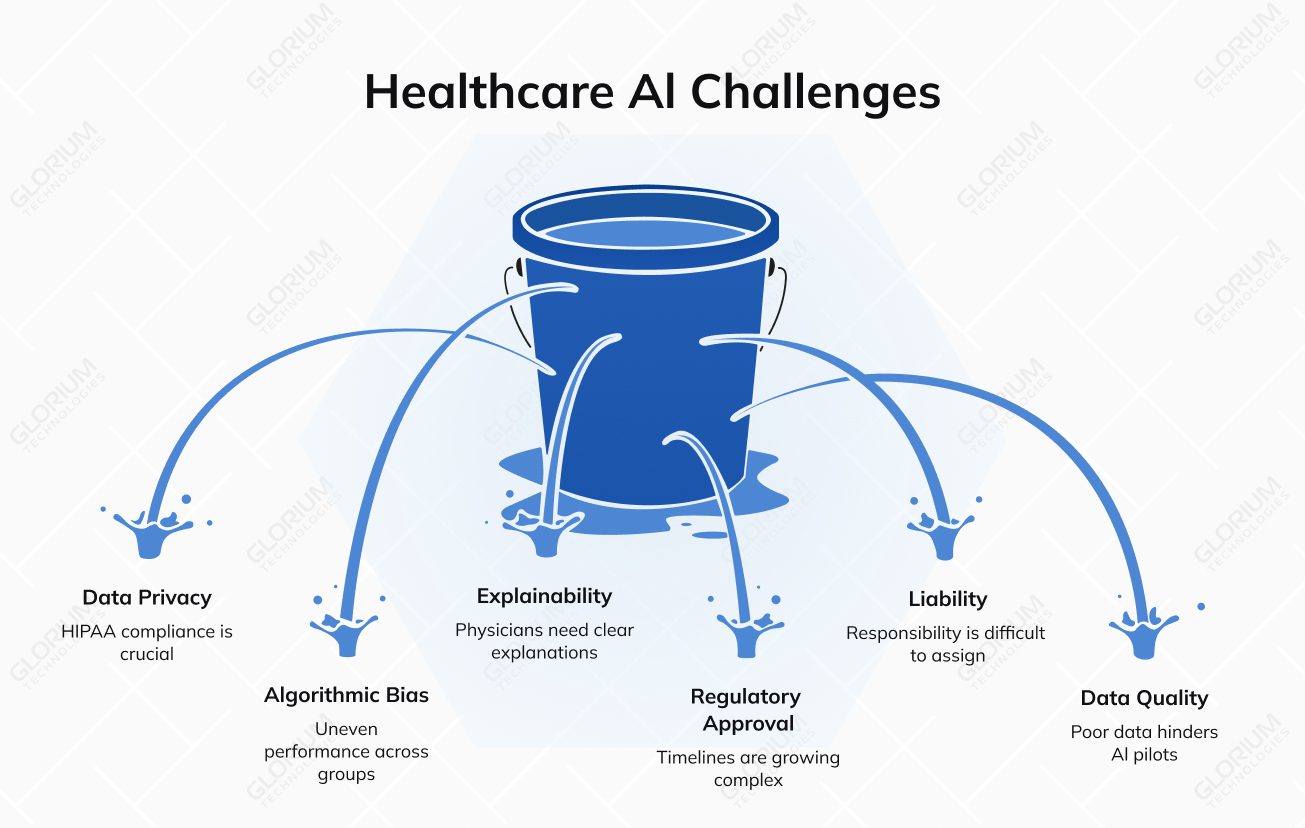

Rapid adoption has not removed the harder questions around healthcare AI. In many cases, it has made them more urgent.

Training AI on patient data requires clear consent logic, de-identification, access controls, and audit trails. HIPAA enforcement already shows how expensive mishandled patient data can become, with OCR resolution agreements reaching multimillion-dollar settlements in past cases. Federated learning is one practical response because it allows several organizations to improve models without moving raw patient records between systems.
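To make the idea concrete, here is a minimal sketch of federated averaging, one common way to implement federated learning. It is an illustration under simplifying assumptions rather than a production recipe: the model is a tiny linear regression, the two "hospitals" and their data are invented, and real deployments add secure aggregation, authentication, and often differential privacy. The point it shows is that only weight vectors leave each site, never the records themselves.

```python
from typing import List, Sequence, Tuple

Weights = List[float]
Dataset = Sequence[Tuple[Sequence[float], float]]  # (features, label) pairs


def local_update(weights: Weights, data: Dataset,
                 lr: float = 0.1, epochs: int = 5) -> Weights:
    """Run a few epochs of gradient descent for linear regression on one site's data."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            error = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * error * xj / len(data)
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def federated_average(site_weights: List[Weights], site_sizes: List[int]) -> Weights:
    """Combine site models, weighting each by its number of local records."""
    total = sum(site_sizes)
    dim = len(site_weights[0])
    return [
        sum(w[j] * n for w, n in zip(site_weights, site_sizes)) / total
        for j in range(dim)
    ]


def train_federated(sites: List[Dataset], dim: int, rounds: int = 100) -> Weights:
    """Coordinator loop: only weight vectors cross the site boundary."""
    global_w = [0.0] * dim
    for _ in range(rounds):
        updates = [local_update(global_w, data) for data in sites]
        global_w = federated_average(updates, [len(d) for d in sites])
    return global_w


# Hypothetical toy data following y = 2*x + 1; the constant 1.0 feature acts as the bias.
hospital_a = [((0.0, 1.0), 1.0), ((1.0, 1.0), 3.0)]
hospital_b = [((2.0, 1.0), 5.0), ((3.0, 1.0), 7.0), ((0.5, 1.0), 2.0)]
model = train_federated([hospital_a, hospital_b], dim=2)
```

After training, `model` sits close to the true coefficients `[2.0, 1.0]` even though neither site ever saw the other's rows. Weighting by record count keeps a small clinic from pulling the shared model as hard as a large system.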

Gaps in training data can lead to uneven model performance across patient groups. One widely cited 2019 study found that correcting bias in a population health algorithm would increase the share of Black patients identified for additional care from 17.7% to 46.5%. The issue was not only technical. The model used healthcare costs as a proxy for medical need, which reflected existing access disparities. That is why healthcare AI governance now needs active monitoring for disparate performance across demographic groups.
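Monitoring for disparate performance can start with something as simple as comparing sensitivity (the true-positive rate) across groups. The sketch below is one possible shape for such a check, not an established standard: the record layout, the group labels, and the 0.1 gap threshold are assumptions chosen for illustration, and a real audit would also look at calibration, false-positive rates, and sample sizes.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (group label, true outcome 0/1, model prediction 0/1)


def sensitivity_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Return the true-positive rate per group, skipping groups with no positives."""
    true_pos: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, truth, pred in records:
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def flag_disparity(rates: Dict[str, float], max_gap: float = 0.1) -> bool:
    """Flag when the best- and worst-served groups differ by more than max_gap."""
    return max(rates.values()) - min(rates.values()) > max_gap


# Invented audit sample: each group has four patients who truly needed extra care.
audit = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 0),
    ("group_b", 0, 0),
]
rates = sensitivity_by_group(audit)
needs_review = flag_disparity(rates)
```

In this toy sample the model catches three of four high-need patients in one group and one of four in the other, so the check flags it for review. Running a comparison like this on a schedule, rather than once at launch, is what turns it into monitoring.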

A 2024 FDA review and a UK government-commissioned independent review confirmed that pulse oximeters overestimate blood oxygen saturation in patients with darker skin, and any AI model that ingests pulse oximetry data inherits that measurement bias. Stanford’s Diverse Dermatology Images dataset showed that dermatology AI models drop by 29–40% in ROC-AUC when evaluated on darker skin tones, since most public training datasets, including the widely used ISIC collection, are more than 70% lighter-skinned. Warfarin dosing algorithms built on predominantly white European cohorts have been documented to overdose African American patients because the underlying genetic variants behave differently in that population.

Physicians are unlikely to rely on AI recommendations that they cannot explain to a colleague, patient, or compliance team. FDA, Health Canada, and MHRA transparency principles for machine learning-enabled medical devices emphasize that users need clear information about intended use, risks, limitations, and performance. In practice, clinical AI products increasingly need interpretable outputs, confidence scoring, and clear escalation rules.
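What "confidence scoring and clear escalation rules" might look like in code is sketched below. This is a hypothetical routing policy, not a regulatory requirement or any vendor's actual logic: the `Recommendation` type, the action names, and both thresholds are assumptions, and in practice the cutoffs would be set and validated by clinical governance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    finding: str
    confidence: float  # model-reported probability in [0, 1]


def route(rec: Recommendation,
          show_threshold: float = 0.90,
          review_threshold: float = 0.60) -> str:
    """Map a model confidence score to an explicit, auditable next step."""
    if not 0.0 <= rec.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if rec.confidence >= show_threshold:
        # High confidence: show to the clinician, who still makes the decision.
        return "show_with_explanation"
    if rec.confidence >= review_threshold:
        # Middle band: send to a second human reader before it reaches the chart.
        return "escalate_to_human_review"
    # Low confidence: keep for audit, but do not interrupt the clinician.
    return "log_only"


high = route(Recommendation("possible pneumothorax", 0.95))
middle = route(Recommendation("possible pneumothorax", 0.70))
low = route(Recommendation("possible pneumothorax", 0.20))
```

Every branch ends in a named action, so a compliance team can read the policy directly and a clinician can see why a given suggestion did or did not appear.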

Regulation is moving alongside the market. The FDA maintains an official list of AI-enabled medical devices authorized for marketing in the United States, showing how quickly AI is becoming part of regulated healthcare products. In the EU, high-risk AI rules begin phasing in from August 2026, while high-risk systems embedded in regulated products have an extended transition period until August 2, 2027. This makes regulatory planning a product design issue, not a late-stage documentation task.

When an AI-assisted decision contributes to a poor outcome, responsibility can be difficult to separate between the vendor, development partner, healthcare organization, and clinician. Contracts, consent forms, malpractice policies, and procurement requirements are catching up, but the legal framework still lags behind real-world AI adoption.

AI performance depends on the quality, structure, and availability of the data behind it. Benchling’s 2026 biotech AI report names poor data quality and availability as the leading reason AI pilots fail, with 55% of organizations citing it. For healthcare organizations, the practical lesson is clear: successful AI implementation depends on data engineering fundamentals as much as model sophistication.

The landscape of AI platforms available to health systems falls into four broad categories, each with different trade-offs on cost, compliance, and integration complexity.

Cloud-based platforms from hyperscalers (Google Health AI, Microsoft Azure Health Bot, AWS HealthLake) offer a breadth of capabilities but require significant internal engineering work to integrate with clinical workflows. EHR-integrated tools from Epic and Oracle Health (formerly Cerner) bring convenience but constrain flexibility. Specialized vendors like Tempus, Aidoc, Nuance, and Abridge focus deeply on specific use cases. Custom-built solutions, developed with partners such as Glorium Technologies, enable health systems to build exactly what they need when off-the-shelf options do not fit.

The table below summarizes how these options compare across key evaluation criteria.

| Platform Category | Best Fit Use Case | Regulatory Complexity | Integration Effort | Typical Time to Value |
|---|---|---|---|---|
| Hyperscaler cloud platforms | Data-heavy analytics, population health | Moderate, depends on configuration | High, significant custom work | 6–12 months |
| EHR-integrated tools (Epic, Cerner) | Clinical documentation, predictive alerts | Low, vendor handles most | Low, native to workflow | 2–4 months |
| Specialized clinical AI vendors | Imaging, ambient scribing, triage | Moderate, FDA-cleared in most cases | Moderate, point integrations | 3–6 months |
| Custom-built solutions | Unique workflows, competitive differentiation | Variable, managed end-to-end | High, full build required | 4–9 months |
| Hybrid deployments | Data sovereignty plus cloud scale | High, multiple frameworks | High, multi-layer architecture | 6–12 months |

Off-the-shelf tools are sufficient for common scenarios like ambient scribing, standard imaging triage, and routine claims processing. Custom development becomes necessary when the workflow is unique, the compliance profile is unusual, or the organization wants to own the intellectual property outright. The right answer depends on organizational maturity, budget, and risk tolerance. Many health systems run hybrid architectures, keeping sensitive data governance on-premise while running AI processing in the cloud.

Several developments are worth tracking closely through the rest of 2026 and into 2027.

Federated learning achieved 94.8% diagnostic accuracy for gross tumor volume segmentation across 12 hospitals in 8 nations while keeping raw patient data inside each institution. That result is a model for how multi-institutional AI collaboration can work without compromising HIPAA compliance. This matters because the next generation of clinical AI models will require training data from diverse patient populations, and federated architectures address the privacy problem that has historically prevented such collaboration.

The AI drug discovery market is forecast to grow from $5–7 billion in 2025 to $8–10 billion in 2026, reflecting strong industry confidence in computational drug design. These forecasts warrant caution, however: early Phase I success does not translate directly to approved medicines, and historical pharmaceutical attrition claims approximately 90% of candidates regardless of discovery method. Chinese AI drug discovery companies increased their share of global biotech licensing deals from 21% in 2023–2024 to 32% in Q1 2025, reshaping the competitive landscape for healthcare AI globally.

Autonomous systems that plan and execute multi-step workflows, from prior authorization to patient outreach to revenue cycle tasks, are the newest category drawing serious investment. Job postings for agentic AI roles grew roughly tenfold between 2023 and 2024, according to McKinsey, which reflects how quickly companies are moving to build these capabilities.

Much of the current regulatory effort goes into standing up approval infrastructure rather than guiding organizations through deployment itself, where models keep changing after launch. For health systems evaluating AI implementation partners, this gap creates demand for compliance-experienced development teams who understand both the technical and regulatory terrain.

Healthcare AI is becoming core infrastructure, and the organizations that treat it that way, with disciplined medical data governance, clear clinical ownership, and compliance-aware architecture, are the ones producing measurable gains in patient outcomes and operating margins.

Glorium Technologies has spent more than 15 years building healthcare software for hospitals, digital health startups, diagnostic labs, and medical device manufacturers. Our work includes HIPAA-compliant patient platforms, EHR integrations, clinical decision support tools, and custom AI solutions for everything from remote monitoring to ambient documentation. We bring ISO 13485 and HIPAA experience to regulated engagements, which matters when a project has to pass an audit before it goes live.

Wondering how healthcare development looks in real life? Recently, Glorium Technologies worked with Turtle Health, a U.S.-based fertility testing company, to build a platform for at-home testing. The system supports the full patient flow, from intake forms and remote testing to clinician review and fertility reports. It also includes patient, healthcare provider, and admin portals, helping the company improve UX, keep data in one place, and double operational speed.

If you are evaluating your first healthcare AI project or scaling an existing one, the team at Glorium Technologies can help with discovery, build, compliance, and post-launch support. Contact us to talk through your project and get a realistic view of timeline, scope, and budget.

AI tools generally work alongside your existing EHR rather than replacing it. Most clinical AI products are designed to read from and write to systems like Epic, Cerner, or Athena through standard APIs and HL7 FHIR interfaces. Replacement is rare and usually makes sense only when the underlying EHR is near the end of its life. Glorium Technologies typically starts by mapping the existing data flows and integration points, then layers AI capabilities on top without disrupting the record of truth.
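As a rough illustration of what reading from an EHR over HL7 FHIR involves, the sketch below pulls a heart-rate value out of a FHIR `Observation` resource. The JSON is a hand-written sample rather than output from any real system, the helper name `read_quantity` is our own, and a production integration would fetch the resource from the EHR's FHIR endpoint with proper authorization instead of parsing a literal string.

```python
import json
from typing import Optional, Tuple

LOINC = "http://loinc.org"
HEART_RATE = "8867-4"  # LOINC code for heart rate


def read_quantity(observation: dict, loinc_code: str) -> Optional[Tuple[float, str]]:
    """Return (value, unit) if the Observation carries the given LOINC code, else None."""
    if observation.get("resourceType") != "Observation":
        return None
    codings = observation.get("code", {}).get("coding", [])
    if not any(c.get("system") == LOINC and c.get("code") == loinc_code for c in codings):
        return None
    quantity = observation.get("valueQuantity")
    if quantity is None or "value" not in quantity:
        return None
    return float(quantity["value"]), quantity.get("unit", "")


# Hand-written sample resource; the patient reference is a placeholder.
sample = json.loads("""
{
  "resourceType": "Observation",
  "status": "final",
  "code": {
    "coding": [{"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}]
  },
  "subject": {"reference": "Patient/example"},
  "valueQuantity": {
    "value": 72,
    "unit": "beats/minute",
    "system": "http://unitsofmeasure.org",
    "code": "/min"
  }
}
""")
reading = read_quantity(sample, HEART_RATE)
```

Here `reading` is `(72.0, "beats/minute")`. Matching on the coded `system` and `code` pair rather than the display text is what lets the same function work across EHR vendors that label the measurement differently.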

Four things matter more than general tech skill. First, direct healthcare domain experience, including HIPAA, ISO 13485, and ideally FDA pathway familiarity. Second, deep EHR integration capability, especially Epic and HL7 FHIR. Third, a track record of moving projects from discovery through compliance validation to production. Fourth, a discovery-first methodology that avoids building into an unclear scope. Ask for case studies, specific regulatory experience, and examples of past clinical stakeholder engagement.

Yes. Glorium Technologies works with hospitals, clinics, startups, and enterprise health systems at every stage of AI maturity. For teams just starting out, the engagement usually begins with a short discovery phase to define the most impactful use cases, assess data readiness, and map out the regulatory obligations. Starting small with one clearly scoped use case, validating results, and then expanding is the pattern that produces the most predictable ROI.

Yes. Many clients prefer to start with a scoped proof of concept or MVP before committing to a full build. A proof of concept typically runs 4 to 8 weeks and validates the core technical approach, data flow, and user experience against real clinical requirements. If the PoC hits its success metrics, the same team can move directly into full development without losing context. Glorium Technologies has MVP development services and PoC development engagements structured for exactly this kind of progression.

Timelines depend on scope, compliance profile, and data readiness, but rough ranges are useful. A focused proof of concept for a single use case usually runs 6 to 12 weeks. A full MVP with EHR integration and production-grade compliance typically takes 4 to 9 months. Enterprise deployments spanning multiple workflows and departments can stretch to 12 to 18 months. The single largest variable is data quality. Projects that start with clean, well-governed clinical data move two to three times faster than those that begin with fragmented records and inconsistent coding.

Glorium Technologies helps healthcare organizations and digital health companies move from AI innovation to practical AI integration. Our team supports projects across electronic health records, clinical documentation, revenue cycle management, claims processing, prior authorization, patient engagement, and care delivery. We can help design secure AI systems, connect AI tools with hospital systems, structure clinical data, and build workflows where human oversight remains part of decision-making. This approach helps healthcare providers improve efficiency, reduce administrative burdens, and use healthcare AI without compromising patient safety or care quality.

Glorium Technologies can help build healthcare AI solutions for clinical decision support, medical imaging workflows, utilization management, treatment planning, patient records analysis, clinical trials support, drug discovery platforms, and AI agents for administrative or clinical workflows. We also work with machine learning models, large language models, foundation models, and AI-native architecture for companies that need more than disconnected point solutions. The goal is to turn unstructured and clinical data into actionable insights, support health professionals in clinical settings, and help improve patient outcomes across the healthcare value chain.