AI in Banking Use Cases: How Financial Institutions Adopt AI in 2026

Banks have always been early adopters of technology. From ATM networks to internet banking to mobile apps, financial institutions have consistently put money into systems that cut friction and sharpen risk control. What’s happening right now, though, feels different. Artificial intelligence isn’t a single tool being rolled out in one department. It’s embedded across lending, compliance, customer service, and trading — and the institutions that started treating AI as core infrastructure a few years ago are now running well ahead of those still in pilot mode.

According to McKinsey’s 2024 Global Banking Annual Review, generative AI alone could add between $200 billion and $340 billion of annual value to the global banking industry, largely through productivity gains in knowledge-intensive functions. That figure isn’t speculative — it’s grounded in what’s already in production.

This article covers the AI in banking use cases generating measurable results in 2026: what they are, how they work, and what they actually require to implement. It’s written for CTOs, financial services leaders, and operations executives who are ready to move from strategy to execution.

“Banking is a trust-based business. If you lose trust, there is no bank.”

Sovan Shatpathy, VP of Product Strategy, Oracle Financial Services, Oracle CloudWorld 2025


Banking has gone through several waves of technology adoption. Core systems modernization, internet banking, and mobile banking apps — each one changed how banks competed and how customers experienced financial services. The current wave is different because AI isn’t a new interface or channel. It’s integrated into the decisions, models, and workflows that run the institution itself.

The adoption numbers reflect this. A 2024 survey by the Bank for International Settlements found that more than 85% of central banks and supervisory authorities are either using or actively exploring AI applications. On the commercial side, PwC’s 2025 Financial Services Technology Report found that 72% of banking executives increased their AI investment year over year, with generative AI initiatives being the fastest-growing category across the financial services industry.

What’s changed most recently is the nature of the AI capabilities themselves. Most banking AI until a few years ago was predictive — advanced machine learning models trained on historical data to flag probable outcomes. Generative AI in banking adds a layer of synthesis on top of that. Large language models can now read a 200-page loan file, summarize it, flag inconsistencies, and draft an underwriter’s memo. That’s structured reasoning over unstructured financial data, at scale, done in seconds rather than hours.

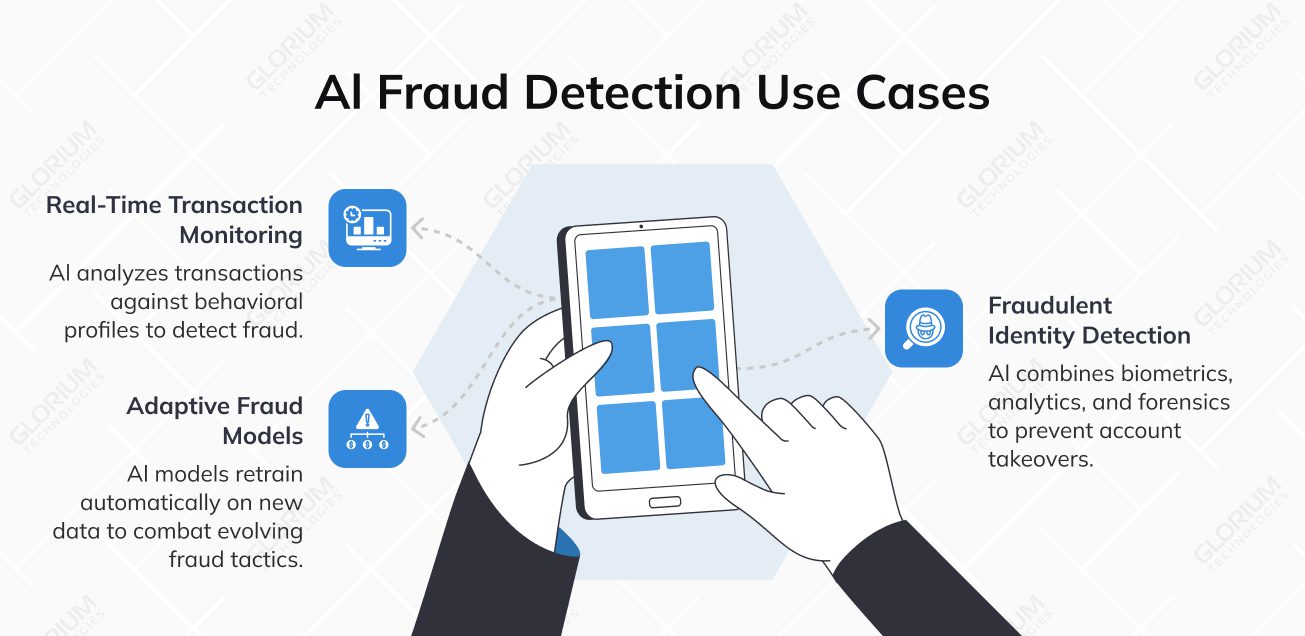

Real-time data processing has also matured. Modern AI systems can ingest streaming transaction data, cross-reference behavioral profiles, and return a risk score in under 50 milliseconds — fast enough to intervene before a payment clears. Combine that with increasingly specific regulatory expectations from bodies like the EBA, FinCEN, and the FCA, and 2026 looks like a genuinely pivotal year for banking AI implementation. Institutions that built their AI foundations in 2023 and 2024 are now scaling.
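To make the scoring step above more concrete, here is a minimal sketch of how a streaming transaction might be cross-referenced against a stored behavioral profile to produce a risk score. The field names, weights, and the 0.7 review threshold are all hypothetical, and a production system would rely on a trained model rather than hand-set rules.

```python
from dataclasses import dataclass
import time

@dataclass
class Profile:
    """Hypothetical behavioral profile kept per customer."""
    avg_amount: float           # typical transaction size
    home_country: str           # country where the customer usually transacts
    known_merchants: frozenset  # merchants seen before

@dataclass
class Transaction:
    customer_id: str
    amount: float
    country: str
    merchant: str

def risk_score(tx: Transaction, profile: Profile) -> float:
    """Return a score in [0, 1]; the weights are illustrative, not calibrated."""
    score = 0.0
    if tx.amount > 5 * profile.avg_amount:
        score += 0.5  # unusually large amount for this customer
    if tx.country != profile.home_country:
        score += 0.3  # unfamiliar geography
    if tx.merchant not in profile.known_merchants:
        score += 0.2  # first-time merchant
    return min(score, 1.0)

# In-memory stand-in for a low-latency profile store
profiles = {"c1": Profile(80.0, "GB", frozenset({"grocer", "rail"}))}
tx = Transaction("c1", 950.0, "BR", "electronics")

start = time.perf_counter()
score = risk_score(tx, profiles[tx.customer_id])
elapsed_ms = (time.perf_counter() - start) * 1000

decision = "hold for review" if score >= 0.7 else "allow"
print(f"score={score:.2f} decision={decision} latency={elapsed_ms:.3f} ms")
```

The point of the sketch is the shape of the loop: look up the profile, score, and decide before the payment clears. In practice, most of the latency budget goes to the profile lookup and model inference rather than the decision logic itself.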

We believe there is a strong AI-enhanced version of nearly every banking function. And we have discovered that nearly every critical operation can be improved with AI in measurable ways. But AI transformations are still business transformations. They must drive real value for customers and improve outcomes for the institution.

The pressure to move is coming from three directions at once. Consumer fraud losses hit $12.5 billion in 2024, up 25% from the year before, and more than half of that fraud now involves AI on the attacker’s side. The institutions still relying on rule-based fraud detection are playing catch-up against adversaries who have already automated their methods. The competitive picture is just as stark: JPMorgan Chase runs over 500 AI use cases in production and reports $2 billion in annual benefits.

Regulatory pressure is adding a third layer. The EU AI Act classifies credit scoring as high-risk, with compliance required by August 2026, and the U.S. Treasury’s FS AI Risk Management Framework introduces 230 control objectives that are already shaping how regulators evaluate institutions, formal deadlines or not.

The range of AI applications across banking operations is wide, but not all of them are at the same level of maturity. Some use cases, such as fraud detection and document processing, have been running at scale for years. Others, particularly those involving generative AI tools and agentic workflows, are newer and moving quickly. The sections below cover what’s generating the most measurable impact in 2026.

| Use case | Primary benefit | Maturity level |
| --- | --- | --- |
| Fraud detection and prevention | Reduced fraud losses, real-time protection | High (widely deployed) |
| Customer service and personalization | Improved customer satisfaction, lower support cost | High (standard in retail banking) |
| Credit scoring and loan approvals | Faster decisioning, expanded credit access | High (production at scale) |
| Regulatory compliance and AML | Lower compliance cost, fewer false positives | Medium-high (growing adoption) |
| Trading and predictive analytics | Better risk visibility, faster execution | Medium-high (strong in capital markets) |
| Process automation | Lower operational costs, fewer errors | Medium (accelerating adoption) |
| Agentic AI and gen AI for internal ops | Productivity gains in knowledge work | Early (moving to production) |

Customer-facing AI is one of the most visible areas of investment in banking, and the gap between AI-powered and traditional service delivery keeps widening. The applications here span reactive support, proactive engagement, and personalized financial advice, all areas where customer behavior data determines the quality of the outcome.

AI chatbots and virtual assistants built on large language models handle complex, multi-turn conversations and hand off to human agents when needed. According to Juniper Research, AI-powered chatbots are projected to save the banking sector more than $7.3 billion annually by 2026 through reduced contact center workload — a direct improvement in both operational costs and customer satisfaction.

Personalized product recommendations let AI models analyze vast amounts of financial data — spending patterns, transaction history, life events inferred from behavioral signals — and surface relevant products at the right moment. It’s one of the clearest examples of how generative AI tools genuinely enhance customer interactions beyond what traditional campaigns can achieve.

Proactive customer engagement closes the loop: AI systems monitor customer behavior, flag accounts showing signs of financial stress or churn risk, and trigger outreach before an account deteriorates — risk management expressed through the customer engagement layer.

Fraud prevention is the most widely deployed AI use case in banking, and for good reason. 90% of financial institutions now use AI for this purpose, with two-thirds having integrated it within the past two years.

Credit scoring and loan approvals

A key consideration for any bank deploying AI for credit decisions is a governance model that can properly protect against bias and satisfy regulatory requirements. The stakes here are high: the EU AI Act now classifies credit scoring as high-risk AI, with mandatory transparency and full compliance required by August 2, 2026.

The bias risk is real, not theoretical. One study found female applicants received credit scores 6-8 points lower than their male counterparts due to algorithmic bias, making continuous testing essential rather than optional. This means institutions cannot treat bias auditing as a one-time pre-deployment check. It needs to be an ongoing operational process built into the model lifecycle.
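One way to picture bias auditing as an ongoing process is a recurring check that compares score distributions across groups on each new batch of decisions. The sketch below is a simplified illustration using synthetic data; the function name, the record layout, and the 5-point threshold are assumptions, not a regulatory standard.

```python
from statistics import mean

def audit_score_gap(records, group_key, score_key="score", max_gap=5.0):
    """Compare mean scores across groups and flag gaps above max_gap points."""
    by_group = {}
    for record in records:
        by_group.setdefault(record[group_key], []).append(record[score_key])
    means = {group: mean(scores) for group, scores in by_group.items()}
    gap = max(means.values()) - min(means.values())
    return {"means": means, "gap": gap, "flagged": gap > max_gap}

# Synthetic example batch, not real applicants
batch = [
    {"sex": "F", "score": 690}, {"sex": "F", "score": 702},
    {"sex": "M", "score": 698}, {"sex": "M", "score": 708},
]

bias_report = audit_score_gap(batch, "sex")
print(bias_report)
```

A raw gap in mean scores does not by itself prove discrimination, since it ignores legitimate risk factors. A fuller audit would compare applicants with similar financial profiles and track approval rates and error rates as well. Scheduling a check like this against every scoring batch is what turns a one-time review into an operational process.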

Take the time to think through the full lifecycle of any AI credit scoring implementation and note potential costs upfront. It is not enough to assume that model development covers everything. Budget for continuous bias auditing, explainability requirements, and ongoing monitoring — these are not optional add-ons but core components of any compliant credit scoring AI deployment.

Just as institutions run periodic audits, manual transaction reviews, and scheduled KYC refreshes in a traditional compliance setup, all of these processes can be enhanced with AI — and at dramatically greater scale.

The results are substantial. AI-powered AML solutions reduce false positives by 90-95% compared to traditional rule-based systems, with agentic AI driving productivity gains of 200-2,000% in KYC workflows. HSBC demonstrates what this looks like at enterprise scale: across 62 jurisdictions with 600+ AI use cases, their Dynamic Risk Assessment platform detects 2-4x more suspicious activity while reducing false positives by 60%.

However, scaling from a successful experiment to enterprise-wide deployment remains formidable. We run into obstacles around securing funding, changing the behavior of thousands of compliance analysts, and integrating into legacy transaction monitoring systems. Governance structures are essential — institutions need clear processes to prioritize AI changes and determine whether they fall within the compliance program. The U.S. Treasury’s FS AI RMF, released in February 2026, provides 230 control objectives across seven domains. Though voluntary for now, it is already setting market expectations that function as soft regulation.

Capital markets and wealth management have been among the earliest adopters of AI in the financial services industry. Modern AI-driven trading systems incorporate real-time market microstructure signals, portfolio-level risk parameters, and intraday risk management within a single loop — creating measurable improvements in execution quality and risk-adjusted returns for investment banking operations.

In portfolio risk forecasting, predictive models simultaneously assess market exposure, default probability, liquidity risk, and macroeconomic signals, giving wealth management and capital markets teams real-time visibility that wasn’t previously achievable. Sentiment analysis using NLP models has also become a standard input in investment decisions, scanning news feeds, earnings transcripts, and financial data to surface signals before they become consensus.

Back-office automation generates some of the highest ROI of any AI application in banking, even though it rarely gets the attention customer-facing innovations do. AI systems extract and validate data from loan applications, contracts, invoices, and regulatory forms with accuracy matching manual review — at a fraction of the cost. The same AI-driven process orchestration extends to reconciliation, accounting close cycles, and regulatory reporting, connecting financial data across systems and generating draft reports for human sign-off. McKinsey estimates the gains at a 20 to 30% reduction in operational costs in targeted banking functions — replacing repetitive tasks with structural savings that compound over time.

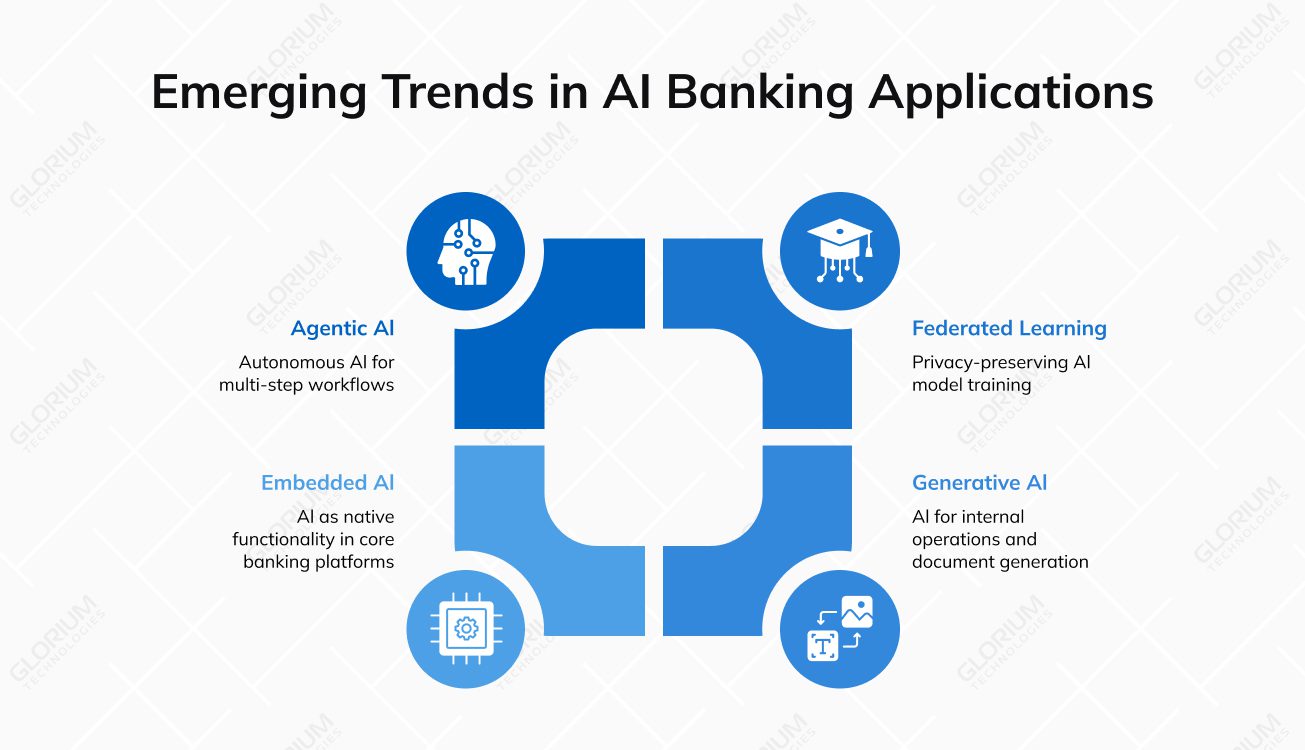

The use cases covered above are largely in production today. Two numbers set the context well: 44% of finance teams are expected to adopt agentic AI in 2026, a 600%+ increase from current levels, and 80% of banks plan to integrate autonomous AI agents into core operations, projecting 30% reductions in manual processing and compliance costs. Here’s what’s actually driving those numbers and what it means for institutions planning their AI roadmap.

Agentic AI is the most consequential of these trends. Autonomous AI systems that execute multi-step banking workflows without continuous human prompting are moving from research environments into early production. An agentic system built for loan processing doesn’t just score an application — it gathers supporting data, triggers verification steps, drafts a recommendation memo, and routes the file to the right decision-maker. Platforms like CogniAgent are enabling this kind of workflow orchestration across enterprise environments, connecting AI models to the specific banking systems and data sources they need to actually operate.
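The loan-processing flow described above can be sketched as a sequence of steps that each act on a shared file and leave a trail behind them. This is a toy illustration only: the step functions are stubs, the routing rule and thresholds are hypothetical, and nothing here reflects the API of any specific platform.

```python
from dataclasses import dataclass, field

@dataclass
class LoanFile:
    applicant: str
    amount: float
    data: dict = field(default_factory=dict)
    checks: dict = field(default_factory=dict)
    memo: str = ""
    assigned_to: str = ""
    trail: list = field(default_factory=list)  # names of completed steps

def gather_data(f: LoanFile) -> None:
    # Stub: a real agent would query internal systems or a credit bureau here
    f.data = {"income": 72_000, "existing_debt": 9_000}

def verify(f: LoanFile) -> None:
    f.checks["income_present"] = f.data.get("income", 0) > 0
    f.checks["debt_to_income_ok"] = f.data["existing_debt"] / f.data["income"] < 0.4

def draft_memo(f: LoanFile) -> None:
    # Stub: in practice a language model could draft this text
    status = "pass" if all(f.checks.values()) else "needs review"
    f.memo = f"{f.applicant}: requested {f.amount:,.0f}, checks {status}."

def route_file(f: LoanFile) -> None:
    # Hypothetical rule: large or failed files go to a senior underwriter
    senior = f.amount > 250_000 or not all(f.checks.values())
    f.assigned_to = "senior_underwriter" if senior else "underwriter"

def run_workflow(f: LoanFile, steps=(gather_data, verify, draft_memo, route_file)) -> LoanFile:
    for step in steps:
        step(f)
        f.trail.append(step.__name__)  # keep a record for later review
    return f

result = run_workflow(LoanFile("A. Example", 120_000))
print(result.memo, "->", result.assigned_to)
```

Note that the workflow ends by routing to a human decision-maker rather than approving the loan itself, which matches the pattern described above. The `trail` list is a stand-in for the audit record a regulated deployment would need.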

Beyond customer-facing applications, generative AI in banking has found strong traction inside internal operations — and this is often where institutions are seeing the fastest productivity gains, faster even than in customer-facing gen AI initiatives.

Gen AI tools are helping compliance teams draft regulatory documents from structured templates. Relationship managers get AI-generated summaries ahead of client meetings, pulling from proprietary data held across CRM systems and transaction records. Research teams use generative AI models to synthesize earnings reports and market data into investment memos that used to take hours of analyst time. Implementation risk is also lower than in customer-facing contexts because human review stays in the workflow throughout.

Banks also sit on enormous repositories of policy documents, past credit decisions, and compliance guidance that are hard to navigate manually. Large language models trained on proprietary data make that knowledge accessible through natural language queries — a good example of gen AI technology delivering value through better data processing rather than entirely new capabilities.

Federated learning is picking up as institutions look for ways to improve model quality without sharing sensitive customer data. Under a federated framework, multiple banks contribute to shared model training by submitting model updates rather than raw customer records — the result is a better-performing model that no single institution could have trained alone. Data privacy is preserved throughout, which matters increasingly under GDPR and equivalent regulations.
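The core aggregation step in this kind of setup is often a weighted average of each participant's model weights, commonly known as federated averaging. The sketch below shows that step in isolation, with made-up weights and sample counts; the function name and data layout are assumptions for illustration.

```python
def federated_average(updates):
    """Average model weights across banks, weighted by each bank's sample count.

    updates: list of (weights, n_samples) pairs, where weights is a list of floats.
    """
    total_samples = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [
        sum(weights[i] * n for weights, n in updates) / total_samples
        for i in range(dim)
    ]

# Hypothetical updates from three banks; only weights and counts are shared,
# never the underlying customer records
bank_updates = [
    ([0.20, -1.00], 1000),
    ([0.40, -0.80], 3000),
    ([0.10, -1.20], 1000),
]

global_weights = federated_average(bank_updates)
print(global_weights)
```

Banks with more training samples pull the shared model further toward their local result. Model updates can still leak some information about the data behind them, so real deployments typically layer secure aggregation or differential privacy on top of this step.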

Embedded AI represents a longer-term structural shift. AI is moving from add-on tools layered over existing banking systems to native functionality within the platforms themselves. Institutions with clean data architectures and modern integration layers will be best positioned to take advantage of this as core banking vendors continue deepening their AI capabilities — accessing these new features without rebuilding their entire infrastructure is one of the central practical challenges of the next phase of banking AI.

Turning an AI strategy into a production-ready banking product requires deep technical capability and a practical understanding of how regulated financial environments actually work. Glorium Technologies has been building software for regulated industries for over 15 years, with a team experienced in AI engineering, compliance-ready infrastructure, and the integration realities that come with established banking systems and legacy architecture.

The work covers the full AI development lifecycle: early-stage discovery and architecture design, deployment, model monitoring, and ongoing governance. For banking specifically, that means experience building fraud-detection pipelines, document intelligence for loan processing, AML monitoring enhancements, and explainable AI systems that hold up under regulatory scrutiny. Glorium Technologies’ AI software development and custom fintech AI development services are built around these requirements, including ISO 27001-certified infrastructure and teams experienced with GDPR and financial data compliance standards.

Contact Glorium Technologies to discuss how we can help you build and scale AI banking implementations with a governance-first approach.

Start at the intersection of business impact and data readiness. Fraud detection and document automation tend to be strong first choices because the data they require — transaction history, document archives — is already structured in most institutions. Identify where repetitive tasks and manual processes are creating the most cost or risk exposure, then validate that you have sufficient clean historical data to train and evaluate a model. A short discovery phase with a technical partner will compress this decision significantly.

A targeted banking AI solution, such as a fraud detection module or an AI-powered document processing workflow that connects into your existing transaction system, can realistically be in production within 10 to 16 weeks. That’s assuming your data is reasonably clean and the integration with existing banking systems isn’t a nightmare.

Where timelines stretch is when you’re dealing with complex AI systems that span multiple banking operations, need custom model training on proprietary data, or require serious work to get talking to legacy systems. In those situations, 4 to 9 months is a more honest estimate. What tends to work best is picking one well-defined use case, getting it live, learning from it, and building outward, rather than trying to tackle too many banking AI priorities at once.

Regulatory compliance for AI in banking touches a lot of areas at once: data privacy rules like GDPR and CCPA, explainability requirements for credit scoring and credit risk assessment models, audit trail obligations for AML and KYC processes, and broader operational resilience standards that regulators in banking and financial sectors are increasingly focused on.

The banks that tend to get this right are the ones that build compliance into their AI architecture from day one, rather than trying to layer it on after deployment. That means explainable models where required, proper audit logging on every AI-assisted decision, and monitoring systems that catch model drift before it becomes a regulatory problem. Sensitive customer data and transaction history need to be handled carefully at every stage.
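For the drift monitoring piece, one widely used measure is the Population Stability Index (PSI), which compares the distribution of a model input or score at training time with what the model sees in production. The sketch below is a minimal version with made-up bin proportions; the 0.2 alert threshold is a common rule of thumb rather than a regulatory requirement.

```python
import math

def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    expected and actual are lists of bin proportions that each sum to 1.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total

baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at training time
current = [0.10, 0.20, 0.30, 0.40]   # hypothetical distribution this month

drift_value = psi(baseline, current)
drift_alert = drift_value > 0.2  # rule-of-thumb threshold, an assumption here
print(f"PSI={drift_value:.3f} alert={drift_alert}")
```

Running a check like this on a schedule, and logging the result alongside each model version, gives a compliance team evidence that drift is being watched rather than discovered after the fact.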

In most cases, yes, though how smooth that process is depends on what your core system actually looks like. Modern core banking platforms expose APIs that make connecting gen AI solutions and advanced machine learning models relatively manageable. Older legacy systems will likely need middleware or custom integration layers to bridge the gap between existing banking infrastructure and newer AI capabilities. Either way, it’s a solved problem; the real question is how much engineering work it takes to get there.

The principle that serves banks best is keeping the banking AI layer loosely coupled from the core system. Using APIs and event streams rather than direct database connections protects operational efficiency and system stability. It also gives you the flexibility to introduce new AI tools, whether that’s AI chatbots for customer interactions, generative AI tools for internal operations, or fraud detection and credit scoring models, without destabilizing the systems your daily banking operations depend on. And it makes it considerably easier to handle sensitive customer data and financial data in line with data privacy and regulatory compliance requirements.
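A small sketch can show what loose coupling looks like in code. Here an in-memory queue stands in for an event stream, the core system only publishes events, and the AI consumer only reads them. The event type, field names, and the simple amount rule are hypothetical placeholders.

```python
import json
import queue

events = queue.Queue()  # stand-in for an event stream such as a message broker topic

def core_publish(tx: dict) -> None:
    """Core system side: publish an event without knowing who consumes it."""
    events.put(json.dumps({"type": "transaction.created", "payload": tx}))

def fraud_consumer(event: dict) -> dict:
    """AI side: reads the event and never touches the core database."""
    payload = event["payload"]
    return {"tx_id": payload["id"], "flag": payload["amount"] > 10_000}

def run_consumers(handlers) -> list:
    """Drain the queue and pass each event to every registered handler."""
    results = []
    while not events.empty():
        event = json.loads(events.get())
        for handler in handlers:
            results.append(handler(event))
    return results

core_publish({"id": "t-1", "amount": 12_500})
core_publish({"id": "t-2", "amount": 40})

flags = run_consumers([fraud_consumer])
print(flags)
```

Adding a second AI tool means registering another handler, with no change to `core_publish`. That is the practical benefit of the pattern: new consumers come and go without the core system being redeployed.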

The minimum requirement is clean, labeled, accessible historical data relevant to the use case you’re targeting. For fraud detection, that means transaction records with confirmed fraud labels. For credit scoring, it means application data paired with repayment outcomes. For document processing, it means a representative sample of the document types you want to automate. The most common obstacle isn’t that banks lack data but that it sits in siloed systems or arrives in inconsistent formats. A data readiness assessment at the start of any AI project saves significant time and cost downstream.
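A data readiness assessment can start very simply: measure how much of the required data is missing and how much of it carries a confirmed label. The sketch below does this for a handful of synthetic fraud records; the function name, field names, and record layout are assumptions for illustration.

```python
def readiness_report(rows, required, label):
    """Summarize missing values and label coverage for a list of dict records."""
    n = len(rows)
    missing_rate = {
        column: sum(1 for r in rows if r.get(column) in (None, "")) / n
        for column in required
    }
    labeled_rate = sum(1 for r in rows if r.get(label) is not None) / n
    return {"rows": n, "missing_rate": missing_rate, "labeled_rate": labeled_rate}

# Synthetic sample, not real transactions
sample = [
    {"amount": 120.0, "merchant": "grocer", "is_fraud": False},
    {"amount": None, "merchant": "rail", "is_fraud": True},
    {"amount": 75.5, "merchant": "", "is_fraud": None},
    {"amount": 300.0, "merchant": "travel", "is_fraud": False},
]

data_report = readiness_report(sample, required=["amount", "merchant"], label="is_fraud")
print(data_report)
```

Numbers like these, produced per source system, make the siloed-data and inconsistent-format problems visible early, before any model training budget is committed.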