AI Recommendations That Pay Off: A Practical Guide to Building the Engine

Recommender systems powered by machine learning turn “too much choice” into revenue. They predict what a user is likely to want next, then surface the best product, content, or offer in that moment. That’s why the broader recommendation engines market is projected to grow from $10.57 billion in 2025 to $131.15 billion by 2033. AI-specific markets are also accelerating, with forecasts reaching $34.4 billion by 2033 and cloud-based deployments already accounting for ~68.5% of implementations.

McKinsey found that companies that grow faster drive 40% more revenue from personalization than their slower-growing competitors. Put simply, a solid artificial intelligence recommendation engine boosts conversion rates, reduces cart abandonment, and lowers churn by helping users find value faster.

In this guide, we’ll break down what an AI-based recommendation system actually does, how it works, and what “efficient” looks like in architecture, data, and rollout. You’ll also learn from the real-world cases of leaders across industries, and see how Glorium Technologies can help you design, build, and scale AI-based recommendation systems that pay off.


A recommendation system (also called an AI recommendation engine or AI recommender system) predicts what a user is most likely to purchase or engage with, based on their browsing history, past purchases, and other behavior signals. The system analyzes how users interact with your catalog, gathering data from clicks, product views, add-to-cart events, purchases, searches, and dwell time. It then ranks items so the shopper sees the most relevant options for that moment in the journey.

In short, a recommendation system is a machine learning approach that uses data to predict and narrow down what people are looking for among many options.

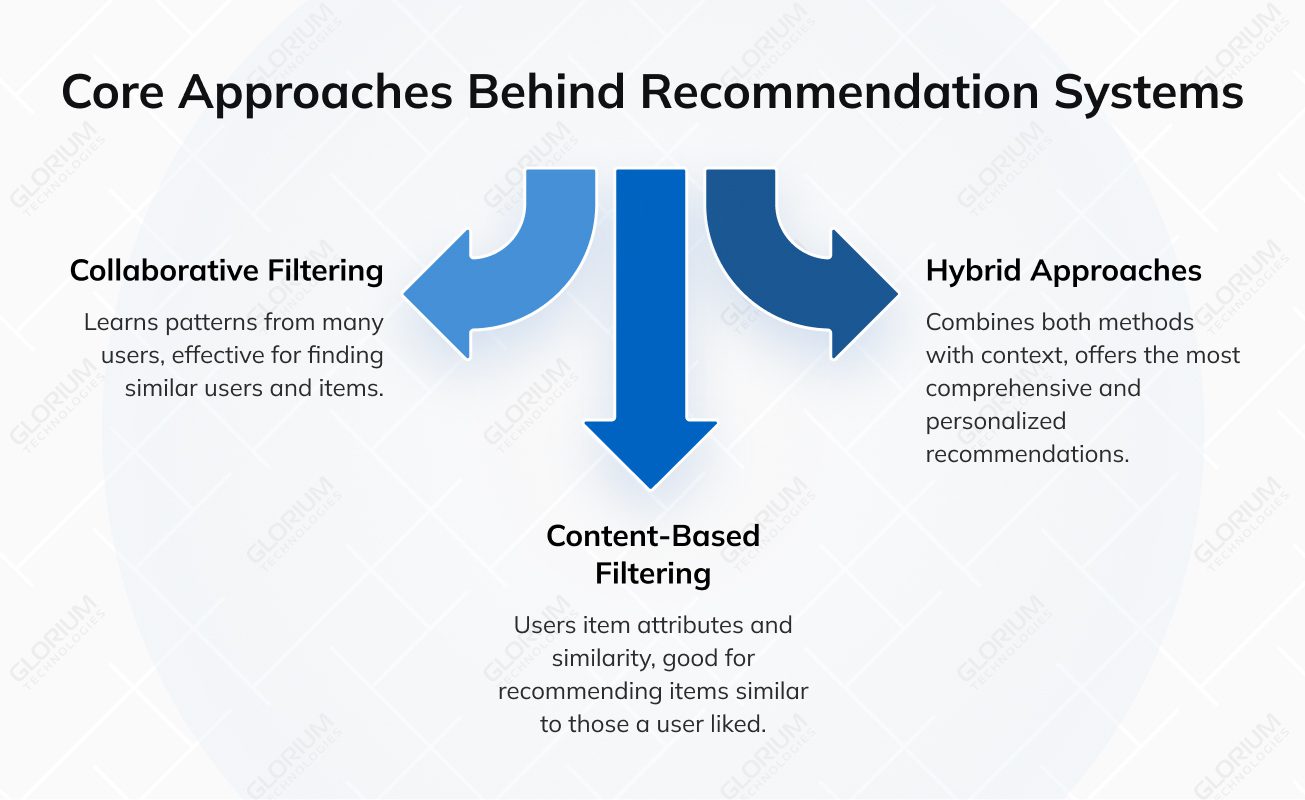

In practice, a recommendation algorithm typically blends several approaches. The most common ones are collaborative filtering, content-based filtering, and hybrid methods that combine the two.

Artificial intelligence recommendations move users faster with less friction, but they also change the company’s day-to-day significantly:

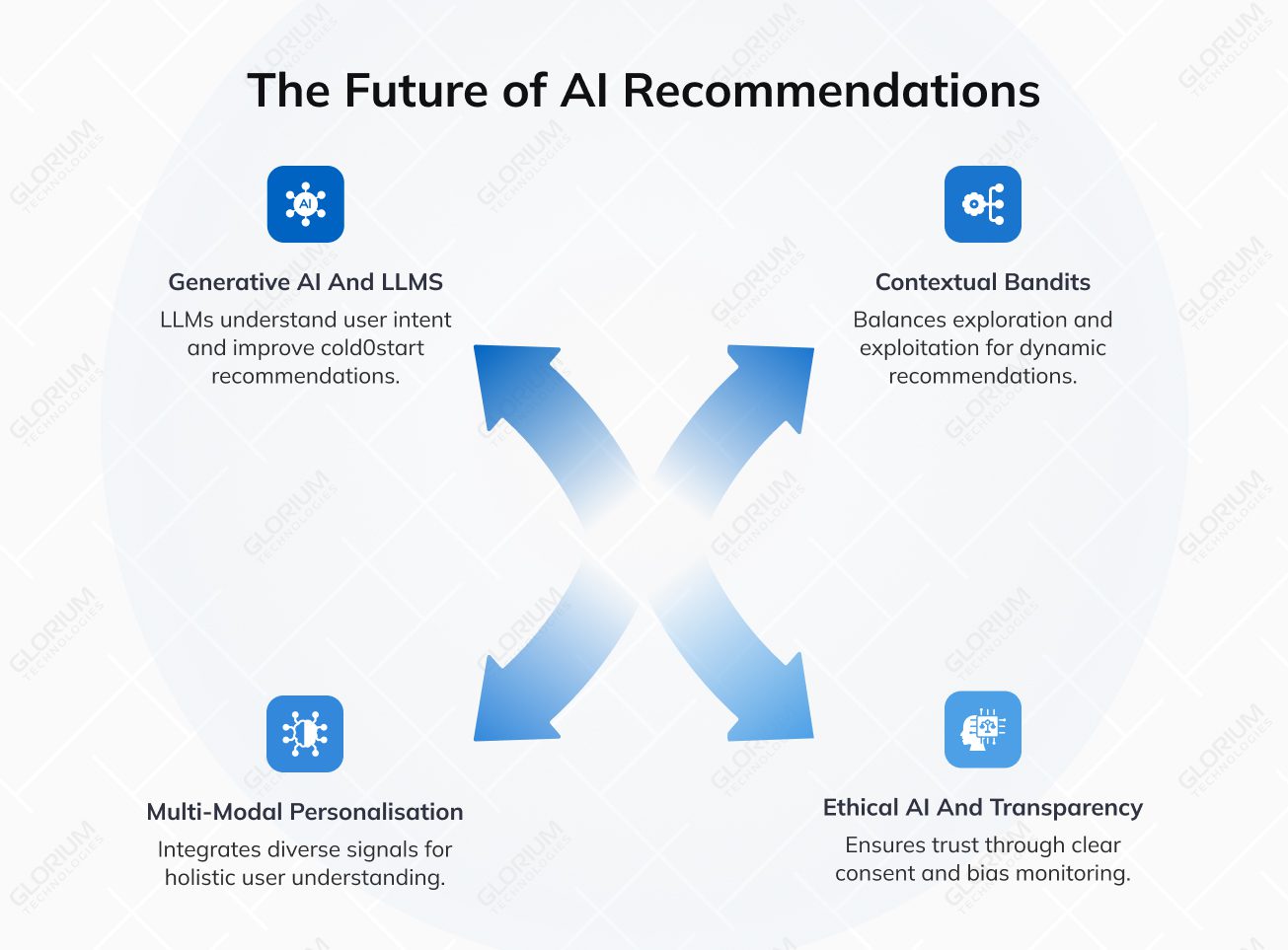

AI systems are shifting from “better rankings” to full experience orchestration. In 2026, the strongest teams build recommendation layers that understand intent, react in real time, and stay consistent across channels, while meeting tougher privacy and transparency expectations. Here are the trends shaping that shift.

LLMs are changing how AI engines understand users and items. Instead of relying only on clicks and tags, teams use LLMs to interpret intent from queries, reviews, chat, and support tickets, making recommendations feel closer to a guided consultation than a carousel.

LLMs also improve cold-start cases. When new users, new items, or sparse history break classic models, LLM-assisted approaches can infer preferences from minimal context and generate richer item representations. In practical terms, that means stronger AI-based product recommendation performance for new catalogs, new markets, and long-tail inventory.

Many products no longer treat recommendations as a static ranking problem. They treat them as a continuous decision loop: choose an option, observe feedback, learn, and adjust. That’s where contextual bandits and reinforcement learning come in.

A contextual bandit strategy helps an AI system balance exploration and exploitation. It can keep serving proven winners while still testing new items, creatives, or placements to avoid stagnation. This approach is especially useful when user intent changes quickly, when inventory rotates often, or when your business needs controlled experimentation without running endless A/B tests.
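To make the explore/exploit trade-off concrete, here is a minimal sketch of an epsilon-greedy bandit keyed by a discrete context such as device type. It is a deliberately simple tabular stand-in, not a production contextual bandit (which would usually model rewards as a function of context features). The class name, the `epsilon` default, and the reward convention (1.0 for a click, 0.0 otherwise) are all assumptions made for illustration.

```python
import random
from collections import defaultdict


class EpsilonGreedyBandit:
    """Tabular epsilon-greedy bandit with separate statistics per context."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        # (context, arm) -> [pull count, total reward]
        self.stats = defaultdict(lambda: [0, 0.0])

    def _mean(self, context, arm):
        pulls, total = self.stats[(context, arm)]
        return total / pulls if pulls else 0.0

    def select(self, context):
        # Explore: with probability epsilon, try a random arm.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        # Exploit: otherwise serve the arm with the best observed mean reward.
        return max(self.arms, key=lambda arm: self._mean(context, arm))

    def update(self, context, arm, reward):
        # Record the observed feedback for this context/arm pair.
        entry = self.stats[(context, arm)]
        entry[0] += 1
        entry[1] += reward
```

In use, you would call `select(context)` when rendering a placement, then `update(context, arm, reward)` once the click or skip is observed. A larger `epsilon` tests new items more aggressively at the cost of short-term relevance.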

Users don’t live in one interface anymore. They browse on mobile, compare on desktop, purchase via email, and ask questions in chat. In 2026, winning AI recommender systems work across surfaces and keep context consistent.

Multi-modal systems push this further by combining signals from text, images, audio, video, and structured metadata. For retail, that means “style” and “compatibility” become first-class signals. For media, it means understanding content beyond tags. For SaaS, it means recommending workflows, features, and help content based on behavior patterns.

As AI personalization becomes more powerful, trust becomes part of performance. Buyers now ask how recommendations are formed, what data is used, and whether the system is fair. That pressure comes from both customers and regulators.

In practice, ethical AI means clear consent and data minimization, bias monitoring, and controls for sensitive attributes. It also means explainability at the right level: not revealing the full model, but giving users and internal stakeholders a clear reason why something is recommended. In 2026, “transparent enough to trust” is a product requirement.

Strong recommendation systems improve how users find products and content inside your experience. They shorten the path from “browse” to “watch/buy/read,” and they increase metrics like conversion, average order value, watch time, and retention. The examples below show what this looks like at scale.

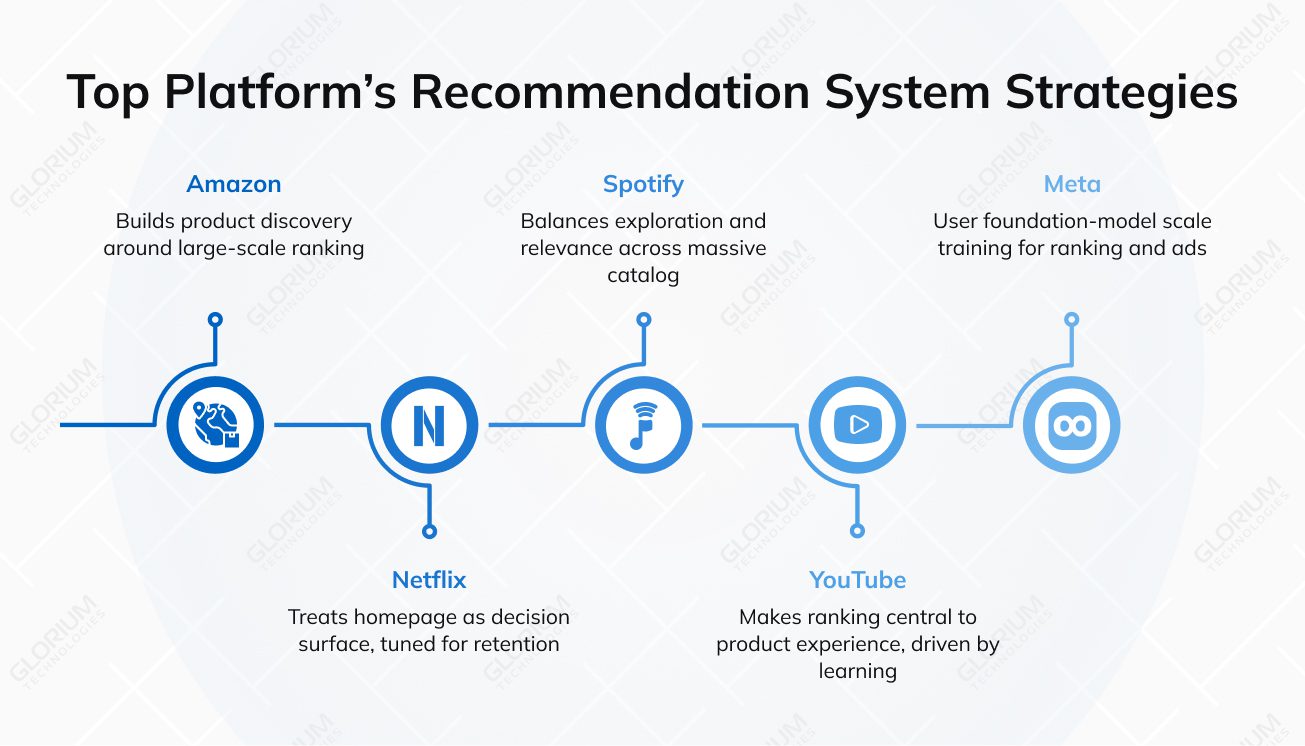

Amazon built product discovery around large-scale ranking, where collaborative filtering and related recommendation algorithms continuously learn from browsing and purchase signals.

More recently, Rufus, Amazon’s AI shopping assistant, shows how a conversational layer can sit on top of an existing engine. Fortune reports Amazon’s estimate of $10 billion in annual incremental sales, and notes internal planning that projected $700 million+ in indirect operating profit contribution in 2025.

Netflix treats the homepage as the main “decision surface,” with ranking tuned for retention and long-term engagement. Recent research shows that 80% of what people watch on Netflix comes from recommendations. In Netflix’s own write-up, the company states that 2 out of every 3 hours streamed come from recommendations surfaced on the homepage.

At Spotify’s scale, the difficult part is balancing exploration with relevance across a massive catalog, using signals from user behavior data and user feedback to serve the next best option. In its Q3 2025 filing, Spotify reported 713M monthly active users, a reminder of how big the ranking problem becomes in production. In 2025, Spotify added a new distribution surface with “Spotify in ChatGPT,” bringing recommendations into conversational flows (live in English across 145 countries at launch).

YouTube made ranking central to product experience, with the feed driven by continuous learning from search history, watch patterns, and other interaction signals. YouTube leadership reports that about 70% of watch time is driven by recommendations.

Meta’s work shows where AI-powered recommendation systems are heading: foundation-model scale training for ranking and ads, plus aggressive infrastructure optimization. In Meta’s Engineering write-up, GEM is reported to deliver a 5% lift in ad conversions on Instagram and 3% on Facebook Feed, alongside a 23× increase in effective training FLOPs and 1.43× improvement in model FLOPs utilization.

An AI-based recommendation system becomes necessary when growth starts hitting friction you can’t fix with campaigns, UX tweaks, or more traffic. If conversion, average order value, retention, or engagement plateaus while your catalog and content keep expanding, recommendations stop being a “nice-to-have” and become infrastructure. They let you turn existing customer data into relevant recommendations at scale, so discovery and cross-sell don’t depend on manual merchandising or static segments.

Strong signals you need recommender systems:

A simple rule: if the product needs guidance, a strong recommendation engine pays off, especially when it can learn from user-item interactions and match each particular user to the best next step.

Most AI-powered recommendation systems rely on multiple data sources and consistent data flows.

If several items above are missing, start with the data foundations first. Even the best AI algorithms and recommendation algorithms can’t compensate for missing signals, noisy tracking, or an inconsistent catalog.

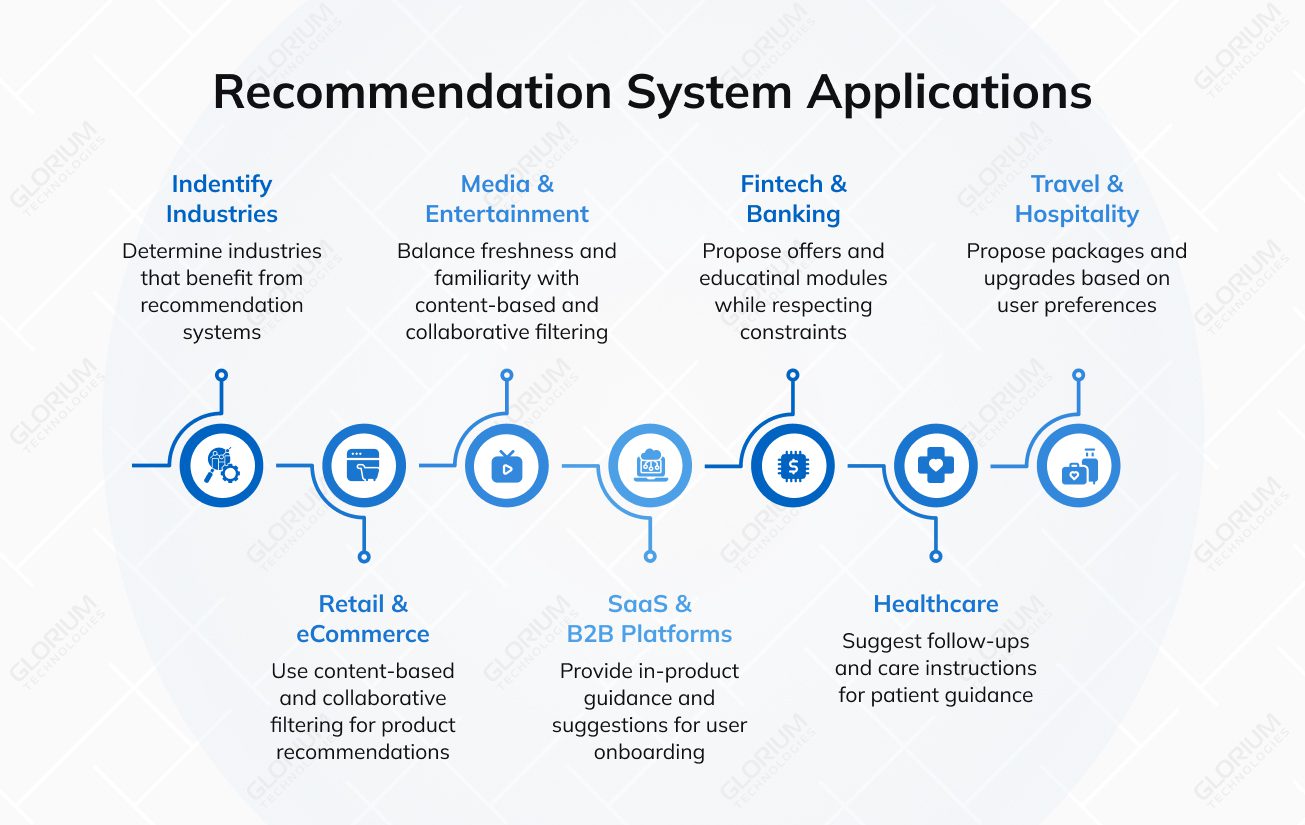

Any business with a large catalog, frequent repeat sessions, or complex decisions can benefit from recommendation systems. The biggest wins come when the recommendation engine influences the next step across web, mobile, email, and in-app journeys. That’s where AI-powered recommendation systems turn scattered clicks into a consistent customer experience, using machine learning algorithms to learn user preferences from user behavior data and user feedback.

On e-commerce platforms, shoppers often get stuck in filters once catalogs grow into thousands of SKUs. A recommendation engine can use content-based filtering (attributes such as size, style, and compatibility) and collaborative filtering (patterns from other users) to propose bundles, in-stock substitutes, and “complete the look” sets for a particular user. When it uses purchase history, browsing history, and search queries as implicit data, the system can deliver relevant recommendations that lift average order value and reduce abandoned sessions.

In the media industry, catalog depth is both an advantage and a discovery problem. Recommender systems help deliver relevant content by learning from user behavior (plays, skips, watch time) and search history, then balancing freshness with familiarity. By combining content-based filtering methods with collaborative filtering, platforms can surface long-tail titles and maintain high user engagement without repeating the same hits.

Complex SaaS products often have a “hidden value” problem: users don’t discover the right feature at the right time. In this context, recommendation systems act as a navigation layer inside the app. They use customer data and user–item interactions, for example, which pages a user opened, which buttons they clicked, which steps they completed, and where they dropped off, to trigger specific prompts. The system can recommend a relevant template, the next workflow step, or the most useful help article for that role and stage. This speeds up time-to-value, reduces repetitive support questions, and improves retention.

Financial and banking journeys come with constraints: eligibility, risk, and compliance rules. Recommendation algorithms can still be effective when they respect these constraints and use data filtering to avoid irrelevant or risky outputs. By combining explicit data (user goals, user ratings where applicable) with implicit data (product views, search queries, user behavior), platforms can propose offers and educational modules that fit the particular user and improve customer satisfaction without relying on broad segments.

In patient portals and digital health apps, the goal is guidance and adherence. For the healthcare industry, recommender systems can suggest follow-ups, care instructions, or direct users to the right service line based on user behavior data and data collected during interactions. When teams analyze user data from appointments, content views, and reminders, the system can suggest relevant content that supports engagement and better outcomes, while keeping the experience simple and respectful.

Travel decisions vary widely by budget, timing, and style. A recommendation engine for the travel and hospitality industry can combine multiple data sources, search history, browsing history, and purchase history to propose packages, upgrades, and add-ons that match user preferences. When teams process data consistently and enrich catalog metadata, AI-powered ranking reduces the need for repeated searches and supports a smoother booking flow.

Note: When a business has limited historical data, a practical starting point is content-based filtering plus lightweight collaborative filtering. As more data accumulates, you can expand into hybrid recommendation systems that use a richer user-item matrix and stronger online evaluation.

ROI is easier to achieve when the recommendation work is anchored to specific decision points, backed by clean signals, and validated through structured experimentation rather than broad rollouts.

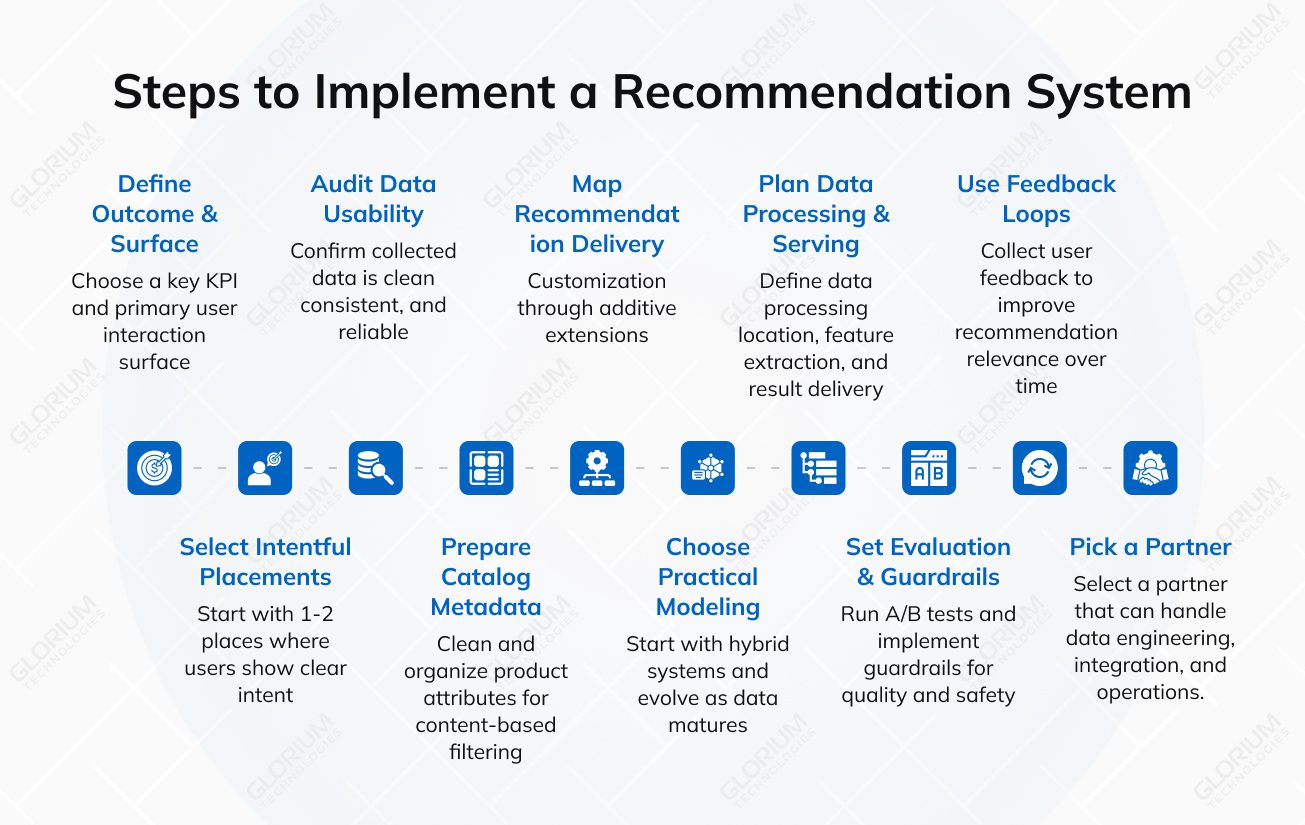

Pick one KPI and one primary surface first. Examples: PDP cross-sell to grow average order value, search-to-product journeys to reduce bounce, or a “next item” feed to increase repeat visits. This keeps the customer experience measurable and prevents “generic personalization” that nobody owns.

Start with 1–2 product touchpoints where intent is already high, such as category pages, search results, product detail pages (PDP), cart, or the in-app feed. These touchpoints generate rich user–item interaction signals early, so you can move from rule-based suggestions to relevant recommendations faster.

Before modeling, confirm that the collected data actually reflect behavior. You need consistent event names, stable IDs, and reliable timestamps. Your core data sets usually include:

This is mostly behavioral data and implicit data, and it’s what makes the system learn without asking users for too much.
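The checks described above (consistent event names, stable IDs, reliable timestamps) can be sketched as a small validator that runs before events reach the training pipeline. The `Event` fields and the `ITEM_EVENTS` vocabulary are hypothetical placeholders, so substitute your own tracking plan.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical vocabulary of item-level events; replace with your tracking plan.
ITEM_EVENTS = {"view", "click", "add_to_cart", "purchase"}


@dataclass(frozen=True)
class Event:
    name: str
    user_id: str
    item_id: str
    ts: datetime


def validate_event(event, now=None):
    """Return a list of problems found in one event; an empty list means it passes."""
    now = now or datetime.now(timezone.utc)
    problems = []
    if event.name not in ITEM_EVENTS:
        problems.append(f"unknown event name: {event.name!r}")
    if not event.user_id:
        problems.append("missing user_id")
    if not event.item_id:
        problems.append("missing item_id")
    if event.ts.tzinfo is None:
        # Naive timestamps cannot be ordered reliably across sources.
        problems.append("timestamp has no timezone")
    elif event.ts > now:
        problems.append("timestamp is in the future")
    return problems
```

Tracking the share of events that fail each check over time is often a quick way to spot instrumentation regressions after a release.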

A recommendation layer can’t rank what it can’t understand. Clean attributes, taxonomy, pricing, availability, and content metadata are the baseline for content-based filtering methods. If you have limited historical data (new items or a new market), strong metadata is what protects early performance.
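As an illustration of why metadata quality matters, the sketch below ranks items purely by attribute overlap (Jaccard similarity), one simple form of content-based filtering. It assumes each catalog entry is a set of attribute tags. The function names and that catalog shape are illustrative, not taken from any specific library.

```python
def jaccard(a, b):
    """Overlap between two attribute sets, from 0.0 (disjoint) to 1.0 (identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def similar_items(target_id, catalog, top_n=3):
    """Rank other catalog items by attribute overlap with the target item."""
    target = catalog[target_id]
    scored = [
        (jaccard(target, attrs), item_id)
        for item_id, attrs in catalog.items()
        if item_id != target_id
    ]
    # Highest overlap first; ties broken by item ID for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [item_id for score, item_id in scored[:top_n] if score > 0]
```

Because this needs no interaction history, it works for brand-new items, but only as well as the attributes allow: missing or inconsistent tags translate directly into weaker matches.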

Map where personalized recommendations show up: web modules, in-app feeds, email blocks, or push triggers. Then define what “good” means for each surface: diversity, freshness, stock constraints, frequency caps, and what must never be shown. This is how you turn ranking into personalized customer experiences.

For most teams, the winning starting point is hybrid systems:

As data matures, move to hybrid recommendation systems that leverage multiple data sources (events, catalog, context, and text) so the engine can consistently suggest relevant content.

If your product has sufficient density, add user-based or item-based collaborative filtering to improve discovery beyond obvious similarities. Over time, combining collaborative filtering with content signals usually produces the most stable lift in real-world catalogs.
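For readers who want to see the mechanics, here is a minimal item-based collaborative filtering sketch. It assumes binary implicit feedback given as `(user_id, item_id)` pairs and uses cosine similarity between items. The pairwise loop is quadratic in the number of items, so treat it as a teaching example rather than something to run on a full catalog.

```python
import math
from collections import defaultdict


def item_similarities(interactions):
    """Cosine similarity between items from binary (user_id, item_id) interactions."""
    users_by_item = defaultdict(set)
    for user_id, item_id in interactions:
        users_by_item[item_id].add(user_id)

    sims = defaultdict(dict)
    items = list(users_by_item)
    for i, a in enumerate(items):
        for b in items[i + 1:]:
            shared = len(users_by_item[a] & users_by_item[b])
            if shared:
                score = shared / math.sqrt(len(users_by_item[a]) * len(users_by_item[b]))
                sims[a][b] = score
                sims[b][a] = score
    return sims


def recommend(user_id, interactions, top_n=3):
    """Score unseen items by summed similarity to items the user already interacted with."""
    sims = item_similarities(interactions)
    seen = {item for user, item in interactions if user == user_id}
    scores = defaultdict(float)
    for item in seen:
        for other, score in sims[item].items():
            if other not in seen:
                scores[other] += score
    # Highest score first; ties broken by item ID for stable output.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]
```

The "sufficient density" caveat shows up directly here: if few users share items, most pairs have no overlap and the similarity table stays nearly empty.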

Define where you process data (stream, warehouse, CDP), how the engine pulls features, and how results get served (API, SDK, batch). Production AI-powered recommendation systems need clear latency targets, caching, and monitoring to keep the experience fast and reliable.
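One common way to protect latency targets is to cache recommendation results for a short time so repeat requests skip the model call. The sketch below is a small in-memory time-to-live cache. The class name, the injectable `clock`, and the `compute` callback are assumptions for illustration, and a production setup would more likely use a shared cache service.

```python
import time


class TTLCache:
    """In-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store = {}    # key -> (stored_at, value)

    def get_or_compute(self, key, compute):
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # fresh entry: skip the expensive call
        value = compute(key)
        self._store[key] = (now, value)
        return value
```

The TTL is a trade-off: longer values cut load and latency, while shorter values let fresh behavior and stock changes reach users sooner.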

Run A/B tests or controlled rollouts and include guardrails: latency, error rate, coverage, and relevance checks. Add data filtering rules for out-of-stock items, duplicates, restricted content, and extreme repetition to keep results reliable as you iterate and optimize.
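The data filtering rules mentioned above can be sketched as a post-ranking pass over the model's output. This example assumes the catalog is a dictionary mapping each item ID to `in_stock` and `category` fields. That shape, the function name, and the `max_per_category` default are hypothetical.

```python
def apply_guardrails(ranked_items, catalog, restricted_ids=frozenset(), max_per_category=2):
    """Filter a ranked list of item IDs, preserving the original order.

    catalog maps item_id -> {"in_stock": bool, "category": str} (assumed shape).
    """
    seen = set()
    per_category = {}
    kept = []
    for item_id in ranked_items:
        meta = catalog.get(item_id)
        if meta is None or not meta["in_stock"]:
            continue  # unknown or out-of-stock items are never shown
        if item_id in restricted_ids or item_id in seen:
            continue  # restricted content and duplicates
        count = per_category.get(meta["category"], 0)
        if count >= max_per_category:
            continue  # cap repetition within one category
        seen.add(item_id)
        per_category[meta["category"]] = count + 1
        kept.append(item_id)
    return kept
```

Keeping these rules outside the model means they can be changed and audited without retraining.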

Collect lightweight user ratings or “not interested” signals where it makes sense. These signals help the engine learn faster, generate better-tailored suggestions, and avoid showing the same items repeatedly. The goal is to provide more useful personalized suggestions.

Look for a team that can handle data engineering, integration, and production operations. In practice, success depends on wiring the engine into real journeys, keeping data clean, and iterating the recommendation process as you collect more data and behavior shifts.

Building an AI-based recommendation system that truly improves conversion, AOV, and retention is much easier with an experienced partner like Glorium Technologies. We bring 15+ years of software development and digital transformation experience, plus hands-on expertise in data engineering, machine learning, and production-grade AI solutions.

Want to see how this translates into real delivery? Here are two relevant examples:

Contact us to plan your recommendation strategy, validate data readiness, and launch a product that feels relevant, works in real time, and moves your core metrics.

Start with two core data sets: customer data signals (how users interact) and a clean catalog (what you recommend). On the user side, collect user behavior data, including browsing history, purchase history, past purchases, search history, search queries, clicks, saves, skips, and other user-item interactions. Add explicit data when you have it (preferences, user ratings, surveys) and rely heavily on implicit data (views, dwell time, add-to-cart) because that’s where most volume comes from. On the item side, ensure each product or asset has stable IDs, attributes, and taxonomy so the recommendation engine can match a particular user to the right candidates and consistently suggest relevant content.

If your history is thin or you’re dealing with limited historical data, you can still launch by combining content-based filtering with lightweight collaborative filtering. Over time, you’ll build a stronger user-item matrix and expand to hybrid systems that use multiple data sources (events, catalog, text, context) to produce personalized recommendations.

Timelines depend on data readiness and how many product touchpoints you want to cover (for example, search results, category pages, PDP, cart, or an in-app feed). In many projects, the first 2–4 weeks go into data audit and tracking fixes, plus identity alignment and catalog cleanup if needed. A focused MVP for 1–2 touchpoints typically takes about 4–8 weeks, including the data pipeline, initial models (often collaborative filtering + content-based filtering), and integration. Production hardening and expansion to additional touchpoints usually brings the total to 8–12 weeks. If you need real-time pipelines, multi-channel delivery, or more complex ranking constraints, the timeline often extends to 12–20+ weeks.

If you want a more precise estimate for your product, book a short intro call and we’ll map scope, data readiness, and a realistic rollout plan.

Bias usually comes from the data collected and exposure loops: what gets shown gets clicked, and what gets clicked gets shown more. Start by auditing whether certain categories, creators, or suppliers are under-exposed. Then add guardrails in ranking: diversity rules, frequency caps, and business constraints. This is also where user feedback matters, both explicit signals (thumbs up/down) and implicit ones (skips, short dwell).

From a modeling perspective, evaluate outcomes by segment rather than just global averages. For AI-powered recommendation systems, keep monitoring in production: conduct drift checks, use fairness metrics, and regularly review edge cases. The goal is simple: maintain relevance while protecting the customer experience, customer satisfaction, and long-term user engagement.
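Evaluating by segment rather than by global average can be as simple as the sketch below, which computes click-through rate per segment and flags segments that fall well below the overall rate. The input shape (pairs of segment label and a clicked flag) and the `tolerance` threshold are assumptions. Real fairness monitoring would typically use more than one metric.

```python
from collections import defaultdict


def ctr_by_segment(impressions):
    """impressions: iterable of (segment, clicked) pairs. Returns {segment: CTR}."""
    shown = defaultdict(int)
    clicks = defaultdict(int)
    for segment, clicked in impressions:
        shown[segment] += 1
        clicks[segment] += int(clicked)
    return {segment: clicks[segment] / shown[segment] for segment in shown}


def underperforming_segments(impressions, tolerance=0.5):
    """Flag segments whose CTR falls below tolerance * the global CTR."""
    impressions = list(impressions)
    if not impressions:
        return []
    global_ctr = sum(int(clicked) for _, clicked in impressions) / len(impressions)
    per_segment = ctr_by_segment(impressions)
    return sorted(s for s, ctr in per_segment.items() if ctr < tolerance * global_ctr)
```

A healthy global number can hide a segment, such as new users, that the system serves poorly, which is exactly what this kind of breakdown is meant to surface.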

Retraining depends on how fast user behavior and the catalog change. High-velocity retail, media, and marketplace environments need more frequent refreshes because new items arrive, prices change, and seasonal demand shifts quickly. Slower catalogs can retrain less often. The best trigger is performance: if CTR, conversion, or coverage drops, retrain sooner.

Many teams retrain embeddings or item representations more often than full models. As you collect more data and expand the signals, schedule retraining to align with your release cadence and operational capacity.
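A performance-based trigger can be sketched as a comparison between baseline and current metrics. The function name, the metric names, and the 10% relative-drop threshold below are illustrative assumptions. Pick thresholds that reflect normal week-to-week noise in your own data.

```python
def degraded_metrics(baseline, current, max_relative_drop=0.10):
    """Return the metrics whose value fell more than max_relative_drop below baseline."""
    degraded = []
    for metric, base_value in baseline.items():
        if base_value <= 0 or metric not in current:
            continue  # cannot compute a relative drop for this metric
        drop = (base_value - current[metric]) / base_value
        if drop > max_relative_drop:
            degraded.append(metric)
    return degraded
```

A non-empty result is a signal to investigate and possibly retrain ahead of schedule, while an empty result means the regular cadence is likely sufficient.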

Yes. Glorium Technologies can support the full delivery cycle for AI recommender systems: defining use cases, analyzing user data, designing the data pipeline, selecting the right machine learning algorithms, and integrating a production-ready recommendation engine into your product surfaces. We also help you choose the right approach (collaborative filtering systems, content-based filtering methods, or hybrid recommendation systems) based on your catalog shape, traffic patterns, and constraints.

If your goal is measurable lift (conversion, average order value, retention), we focus on the end-to-end system: model quality, serving latency, business rules, and experimentation. We bring hands-on experience from real projects, including a predictive scheduling assistant that helps reduce no-shows in healthcare and a computer-vision platform that supports valuation and pricing insights in real estate. To discuss your use case, reach out to us.

The partner choice depends on whether you need speed, differentiation, or deep control. If you want a fast rollout, managed tools and platforms can jump-start recommendation systems with standard pipelines. If recommendations are a product differentiator, a custom partner is usually the better fit because you can tailor the recommendation engine, ranking constraints, and integration across channels.

When evaluating partners, look for proven experience in machine learning, data engineering, and production operations. A strong team should cover instrumentation, data filtering, model training, online serving, monitoring, and ongoing optimization as user preferences and user behavior evolve.

Glorium Technologies is a good fit when you need an end-to-end AI delivery partner for recommender systems, from data readiness and architecture to production deployment and measurable KPI lift, while keeping the build aligned with your business rules, compliance needs, and product roadmap. If you’d like to sanity-check your data readiness or map a realistic rollout plan, contact us.

AI-powered recommendation systems use machine learning algorithms to learn from how users interact with your product. The recommendation engine trains on datasets built from user-item interactions (clicks, saves, skips, browsing history, search queries, and purchase history), which are mostly implicit data. When available, the system also uses explicit data, such as preferences, user ratings, and direct user feedback, to refine relevance more quickly.

Most production recommendation systems combine content-based filtering with collaborative filtering systems. Content signals help match items to intent, while a collaborative filtering approach learns from patterns across other users and similar users using a user-item matrix. The result is more consistent, relevant recommendations, stronger user engagement, and a better customer experience, which is what typically drives higher average order value and improved customer satisfaction over time.
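One simple way to combine the two signals is a weighted blend that leans on content scores for users with little history and shifts toward collaborative scores as interactions accumulate. The sketch below assumes both scores are already normalized to the range 0 to 1. The `ramp` parameter is a hypothetical tuning knob, not a standard setting.

```python
def hybrid_score(content_score, collab_score, n_interactions, ramp=20):
    """Blend two scores in [0, 1]; trust collaborative signals more as history grows.

    ramp is the interaction count at which the collaborative score
    receives full weight (an assumed, tunable value).
    """
    weight = min(n_interactions / ramp, 1.0)
    return (1 - weight) * content_score + weight * collab_score
```

With the default `ramp`, a brand-new user is ranked entirely by content similarity, and a user with 20 or more interactions is ranked entirely by collaborative patterns. Production systems often learn this weighting rather than fixing it by hand.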